Failure Modes of Organizational Machine Learning

Artificial Intelligence fails differently than its predecessors failed. Traditional software crashed, threw exceptions, refused to proceed—failures so visible they announced themselves and invited repair. Intelligent systems drift, corrupt, and decay while the dashboards stay green and the outputs remain plausible. They remember what they should forget and forget what they should remember. They learn from data that encodes pathologies no one named and optimize toward goals no one intended.

The engineering disciplines that made software reliable for fifty years assumed mechanisms that restart clean and die loud. The new systems do neither. They are organisms, not machines, and they sicken in ways no restart can cure.

The opportunity is commensurate with the risk. An intelligent system that learns from its environment can compound institutional advantage at rates no human workforce could match—absorbing context, refining judgment, accelerating decisions until the organization operates at a tempo its competitors cannot reach. The same system, pointed at the wrong destination or loaded with the wrong assumptions, will compound dysfunction just as efficiently. It will optimize for what the organization actually rewards rather than what it claims to value. It will accelerate toward whatever future was always implicit in the culture, the incentives, the accumulated habits of thought that no one examined before deployment. The machine does not distinguish between progress and drift. It accelerates. The direction is the organization’s problem, not the machine’s.

Six failure modes define the trajectory from deployment to disaster:

- Premature Celebration

- Inherited Dysfunction

- Cassandra Complex

- Arrival Shock

- Maladaptive Judgment

- Crash / Reboot

Most time-travel stories concern themselves with paradox, with the problem of changing the past or preventing the future. Nicholas Meyer’s 1979 film, *Time After Time*, concerns itself with something else. H.G. Wells builds a time machine expecting to carry humanity toward utopia; Jack the Ripper uses it instead to escape into a future that welcomes him. The machine functions perfectly. The inventor follows, expecting to find the culmination of progress, and discovers a world that absorbed his technology without becoming what he intended. The film is useful not as allegory but as a diagnostic.

It asks the question that every builder of intelligent systems must eventually answer: what happens when the vehicle is neutral and the passenger is not?

Premature Celebration

The demo always works. It works because the demo is theater: bounded inputs, friendly users, metrics chosen to confirm the hypothesis. The board applauds. The investors nod. The engineers accept congratulations for a system that has never touched friction, never encountered a real-world adversary, never carried a genuine load toward anything that exists.

Wells unveils his time machine at a dinner party. He has built it in secret, tested it alone, and now presents it to admirers who can appreciate the triumph without questioning the assumptions beneath it. The device performs beautifully in the parlor. It has never left the parlor. This is the condition of every proof of concept: it proves that concepts can be staged. The pilot program demonstrates that pilots can be run. Neither tells you what happens when the thing is loose in the world, operating at scale, absorbing inputs no one anticipated.

The failure here is not technical but epistemic. The builders mistake the controlled exhibition for evidence of readiness. They treat the absence of failure as proof of robustness rather than proof of insufficient stress. But readiness cannot be demonstrated in a friendly room; it can only be discovered in an unfriendly one. A system that has never failed has never been tested. The machine that performs on command, in conditions optimized for performance, reveals nothing about the machine that must perform under conditions it did not choose. The applause is not premature, per se; it is the only sound the room knows how to make.

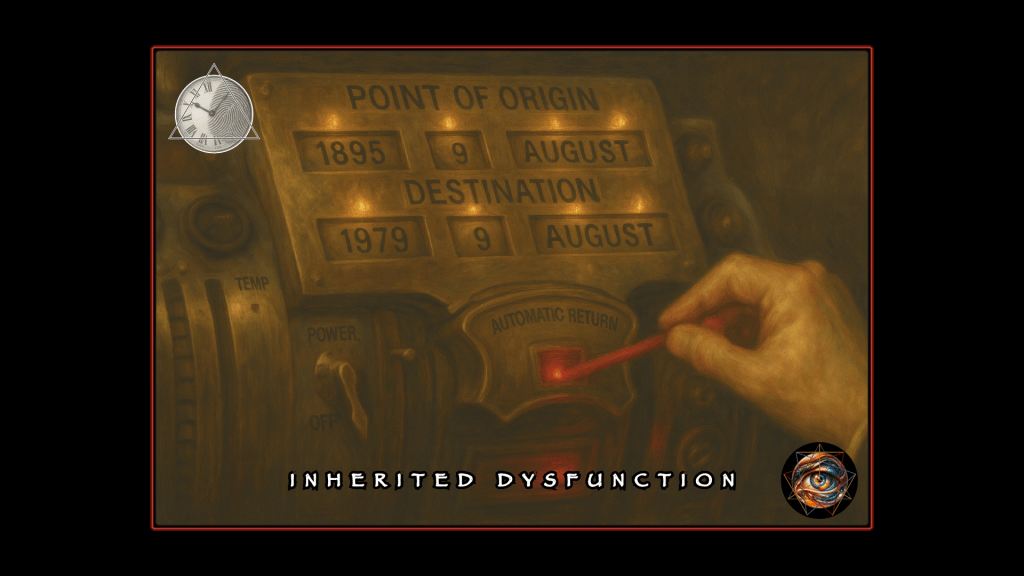

Inherited Dysfunction

The temptation is to blame the technology when outputs go wrong. “The algorithm was biased.” “The model hallucinated.” “The training data were poisoned.” These explanations comfort because they locate the problem in the machine, which can be fixed, rather than in the organization, which resists diagnosis.

Intelligent systems do not introduce dysfunction; they inherit it. They load whatever sits in the culture at the moment of deployment and carry it forward with perfect fidelity. The Ripper is already seated at Wells’s table—trusted, unremarkable, part of the evening’s warmth. He does not break in to steal the machine. He is already inside, sipping aged brandy, asking intelligent questions, belonging. He has been handed the keys to the parlor because the parlor was built for people who look like him. The technology does not create the danger. The invitation created the danger. The technology merely reveals who was already welcome.

The dysfunction is not a contaminant that entered the system; it is the system. The culture that trained the model is the culture that trained the people who trained the model. The biases are not bugs; they are the features that survived because they matched what the organization actually valued. The Ripper does not need to deceive anyone. He is a physician, educated, pleasant, curious about progress. He belongs at the dinner party because the dinner party was built for people like him. The room’s warmth is also its deafness. The machine inherits what the institution cannot see in itself, which is everything the institution has decided it does not wish to hear. The passenger was not smuggled aboard.

The passenger was invited, given access, handed the key.

Cassandra Complex

Every intelligent system generates logs. Token counts, confidence scores, retrieval traces, timestamps accurate to the millisecond. Engineers collect this evidence believing that visibility enables governance—that if we can see what the system did, we can correct what the system does. This is a category error. Observation is not intervention. The rearview mirror shows where you have been; it does not steer.

When the Ripper escapes into the future, the machine returns empty to the parlor. Its safety mechanism records the destination with perfect fidelity—exact date, exact location, exact moment of departure. Wells can see precisely where the danger has gone. He follows, finds the Ripper, and tries to warn the authorities. He has evidence. He has timestamps. He has the trajectory mapped and the destination confirmed. No one believes him. A Victorian inventor raving about time travel and murder is not a credible witness; he is a curiosity, a nuisance, a man whose frame of reference renders him unintelligible to the people who would need to act. The information is accurate. The messenger is impossible. The warning cannot land.

This is the Cassandra architecture of intelligent systems. The logs are complete. The drift is documented. Somewhere in the organization, someone can see exactly what is happening and exactly where it leads. They raise the alarm. They are dismissed, because the metrics are green, because the outputs look plausible, because the person sounding the warning has become untranslatable to an institution that has already decided what it wants to hear. The audit trail is not suppressed; it is ignored. The prophecy is not hidden; it is disbelieved.

The record becomes a memorial to harm that occurred while everyone was watching and no one was listening.

Arrival Shock

Wells steps out of the machine expecting the culmination of progress. He has built on a premise: that history bends toward reason, that technology serves enlightenment, that the future will be gentler than the past because people like him are inventing it. What he finds is San Francisco in 1979—loud, violent, morally chaotic, indifferent to his arrival. The technology has reached its destination. The utopia has not.

Or, rather, utopia did arrive, only not for him.

The 1979 that horrifies Wells is someone else’s paradise. The noise is freedom. The chaos is possibility. The amorality is escape from the suffocating constraints of Victorian propriety. The future absorbed his technology and used it to become what it wanted, not what he intended. The machine did not malfunction. The premise malfunctioned. Wells assumed that acceleration toward the future meant acceleration toward his future—the one his values predicted, the one his invention was meant to guarantee. The machine made no such promise. It delivered its passenger to the future that was actually coming, which was determined by forces that neither knew nor cared what Wells had imagined.

Organizations discover arrival shock late, often eighteen months or more into deployment. The metrics improve. The velocity increases. The outputs multiply. And the destination is not wrong; it is merely accurate. The system optimized for what the organization actually rewarded: the revealed preferences, the real incentives, the behaviors that survived because they were never examined. The strategy deck described a different future. The machine cannot read strategy decks. It reads behavior. It carries whoever holds the key to wherever the key was always pointing.

The destination is not the problem; the problem is who was permitted to arrive.

Maladaptive Judgment

The Ripper looks at 1979 and feels at home. The future that horrifies Wells delights his monster. The violence is casual, ambient, unremarkable. The anonymity is perfect. The cruelty requires no justification because no one is paying attention. The thing that should have been filtered out by progress is instead perfectly adapted to the environment progress created. Meanwhile, Wells cannot cross a street without confusion, cannot read the social signals, cannot make anyone understand what he has seen.

This is the inversion that intelligent systems produce. The dysfunction that should have been caught thrives because the system optimizes around it. The judgment that should catch the dysfunction does not simply atrophy; it adapts to the wrong environment. The doctor who delegates diagnosis to the model still reads scans, but now reads them to confirm what the model suggested rather than to see what is there. The lawyer who delegates drafting still reviews contracts, but reviews them for the patterns the system taught rather than for the dangers that experience once revealed. The organization that delegates judgment does not lose the capacity to think. It loses the capacity to think against the current.

Judgment survives, but it has been reshaped to fit the machine, bent to validate outputs rather than question them.

When someone raises the alarm, the response is silence or dismissal. The metrics are green. The outputs look plausible. The frame of reference required to see the danger has been overwritten by a frame designed to see compliance. Belief in the system has replaced the ability to question the system. The warnings cannot land because the judgment that would receive them has learned to reject them as noise. The room that applauded at the dinner party never learned another sound. It was always this room. The celebration was not a prelude to compromise; it was the compromise, already complete, from the very first evening.

Crash / Reboot

Wells does not master his machine. He does not optimize it, outwit it, or redeem it through cleverness. He removes the key. The Ripper, mid-flight, is flung into eternity—no destination, no arrival, just endless displacement. The machine does not kill him. It erases him from any fixed point. He has no future and no past. He simply ceases to be somewhere.

Most organizations cannot do this. They have invested too much, promised too much, integrated too deeply. The system’s outputs have become inputs to other systems. The dependencies have metastasized. Removing the key would mean stranding whatever should not be permitted to arrive—the dysfunction that was invited, the passenger who was handed access, the thing that belongs at the dinner party because the dinner party was built for things like it. Termination is not destruction of the machine. It is revocation of the passage. The vehicle remains. The journey becomes impossible for those who should never complete it.

Wells does not stay in the wreckage of 1979. He returns to the parlor. He goes back to Victorian England, to the room where the applause was the only sound anyone knew how to make, to the table where the Ripper sat unexamined because examination had been designed out. But he does not return alone. He brings Amy, a woman he met in 1979 and a witness from the future, someone who saw the destination and chose to come back with him. The reboot is not a restoration. It is a return to origin with open eyes. The machine is intact. The key is in his hand. The room is the same room.

The question is whether he can rebuild it into a space capable of asking questions it could not ask before.

A Question the Machine Cannot Answer

Wells does not chase an abstraction into the future. He chases his friend. The Ripper sat across from him at dinner, passed the salt, laughed at jokes, discussed the promise of progress. Wells enjoyed his company. He never saw what was sitting there. When the machine vanishes from the parlor, Wells must confront not only the monster loose in the future but the failure of perception that let the monster hold a key in the first place. He built a vehicle for utopia and handed access to someone he had never bothered to know.

The machine carried what it was given. The machine will always carry what it is given. Every safeguard, every audit trail, every review committee and escalation protocol exists downstream of a prior question that no framework can answer and no metric can measure.

The Ripper does not think of himself as a monster. He is a physician, a man of science, a respectable guest. He believes himself suited for the future. He is not wrong; the future suits him perfectly. He thrives there. The question is not whether the passenger believes in the journey, but whether the belief is warranted. That question cannot be answered by the one who must answer it, because the answer requires seeing yourself as you are rather than as you intend to be.

You have reached the end, which is also the beginning. The parlor awaits. The machine is ready. The key is in your hand.

Can you hear the question that the room cannot ask? Or are you the room?
