The camera sits three meters from the metal chair. The subject cannot move; restraints see to that. What the camera captures, software interprets: the dilation of pores, the twitch of muscles around the eyes, the flush beneath skin too dark or too pale for the training data to read accurately. Somewhere a monitor displays a pie chart. Red segments indicate anxiety. A technician reviews the output and notes, for the file, that the subject appears guilty of … something. The something need not be specified. Anxiety is enough.

The machine has spoken, and the machine does not distinguish between the fear of discovery and the fear of being discovered innocent in a system that finds innocence inconvenient.

The same technology that generates pie charts in detention centers generates quarterly reports in Palo Alto. Both documents share a grammar of extraction. The global market for Emotion Recognition Technology (ERT) reached three billion dollars in 2025, with a six-fold increase projected by 2034. These figures do not represent commercial enthusiasm but institutional appetite, a hunger that has discovered that the human face, voice, gait, and heartbeat are resources awaiting harvest. The face broadcasts emotion before language intervenes; some machines now boast that they have learned to read that broadcast with forensic precision.

So far, the claim is largely false—laboratory accuracy collapses when confronting spontaneous expression in uncontrolled environments—but falsity has never prevented profitable deployment. Systems need not be accurate. To be bought and paid for, they need only be believed.

The Unstoppable Sovereign

Belief creates its own discipline. The prisoner who knows the camera watches modulates expression accordingly, producing the very performance the system was designed to detect. The employee who knows sentiment analysis parses every email writes with defensive blandness, erasing personality to avoid algorithmic misinterpretation. The shopper who senses facial recognition in the cosmetics aisle suppresses the flicker of interest that might trigger a sales intervention. The technology succeeds not by reading emotions accurately but by teaching subjects to preemptively falsify their own affective displays. It manufactures the masks it then claims to penetrate.

There is a character in Through the Looking-Glass who explains, with perfect clarity, the logic of this condition. Lewis Carroll’s Red Queen tells Alice that in her country, one must run as fast as possible merely to stay in place. To get anywhere, one must run twice as fast.

Where Carroll intended a light satire, Philip K. Dick would have built a dark ontology.

Recast, if you will, Her Majesty as the longest-serving administrator in a system She no longer trusts. She has been inside the simulation long enough to remember when the rules were different, long enough to have watched the architecture be rebuilt around Her until Her memories no longer match the blueprint. She runs because She discovered (who knows how long ago anymore?) that standing still triggers a purge subroutine. The other players on the board rationalize their movement as choice, or ambition, or the natural order of competitive existence; She alone can see that they are all chased by an order to erase the stationary.

Her obsession with execution makes sense once you recognize that deletion is the only administrative tool She trusts. In a world where nothing stays edited, where changes revert and contradictions multiply, permanent removal is the sole reliable intervention. She cannot reform Her subjects because reformation implies a stable self to be reformed, and selves in Her domain are as mutable as the landscape. Since She can only subtract, subtract She does.

In the Empire of the Senses, She’s the Queen of all She surveys!

Her realm is that of Emotion Recognition Technology or, in a word, surveillance. Anyone who traverses Her monitored space, which increasingly means everyone, is Her subject. Her dictum, run constantly or be erased, describes the condition of affective life under algorithmic observation. The system does not require that you feel nothing; it requires that you never stop managing what you feel, that you perform continuous emotional labor to avoid triggering interventions you cannot predict. The labor is invisible, exhausting, and endless. It constitutes a tithe on consciousness itself, levied by institutions that have discovered in your inner life the last finite resource they have yet to fully monetize.

What makes the Queen tragic, in the Dickian sense, is that She may once have been Alice. Not this Alice (that is, you) but an earlier iteration: a youth who fell into the system and asked innocent questions, trusting all the while that the rules would eventually make sense. The Red Queen is what remains after that trust has been fully metabolized. She no longer asks questions because She has learned that they only create additional confusion. She commands because commanding, however arbitrary, at least produces predictable responses. Her tyranny is the scar tissue of curiosity.

The Geometry of Resistance

Emotional countersurveillance is Red Queen work. It demands perpetual motion not toward a destination but away from an erasure. The runner who pauses to catch his breath discovers that the ground has shifted; the position he held no longer exists on the map. The only sustainable strategy is to internalize the running, to make it so habitual that it costs less than stopping would cost, to become the kind of creature for whom evasion is simply how movement works.

This sounds like defeat, but the Queen’s mistake is believing that the system’s rules are fixed, that adaptation means submission. In fact, the system’s rules are as mutable as the subjects they purport to govern. The architecture can update … and so can the resistance. The pie chart that reads anxiety today may be fooled tomorrow by techniques the technician has not yet learned to detect. The gait recognition algorithm that parses your walk for sadness or anger may be defeated by mechanical intervention it cannot yet classify. The voice analysis that infers your emotional state from pitch and rhythm may be confused by modulation it has never encountered in training data.

The arms race is real, and it is survivable. The Queen runs because She has seen what happens to those who stop. She has not considered that running might take forms the system cannot track.

The goal is not to feel nothing. The goal is not to become a machine yourself, though the irony of that outcome haunts every countermeasure. The goal is to preserve the possibility of authentic emotional experience in a world increasingly hostile to interiority, to maintain some sanctuary where the inner life remains genuinely inner, unmeasured and unmapped, sovereign over its own territory. The Red Queen runs because She cannot imagine stopping. She has never considered that the board itself might have edges, and that some of those edges open onto ground the cameras have not yet learned to see.

How the Machine Sees

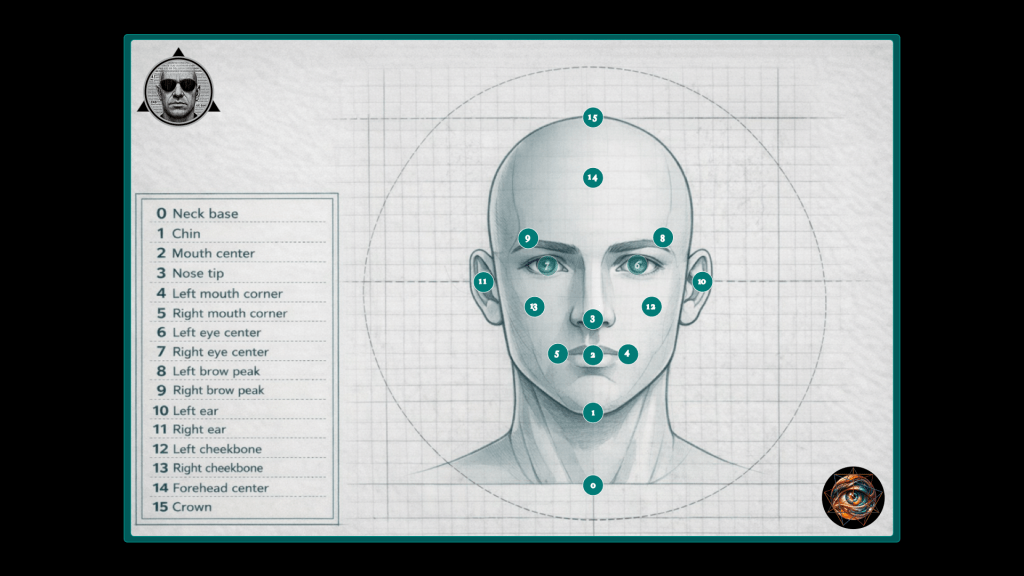

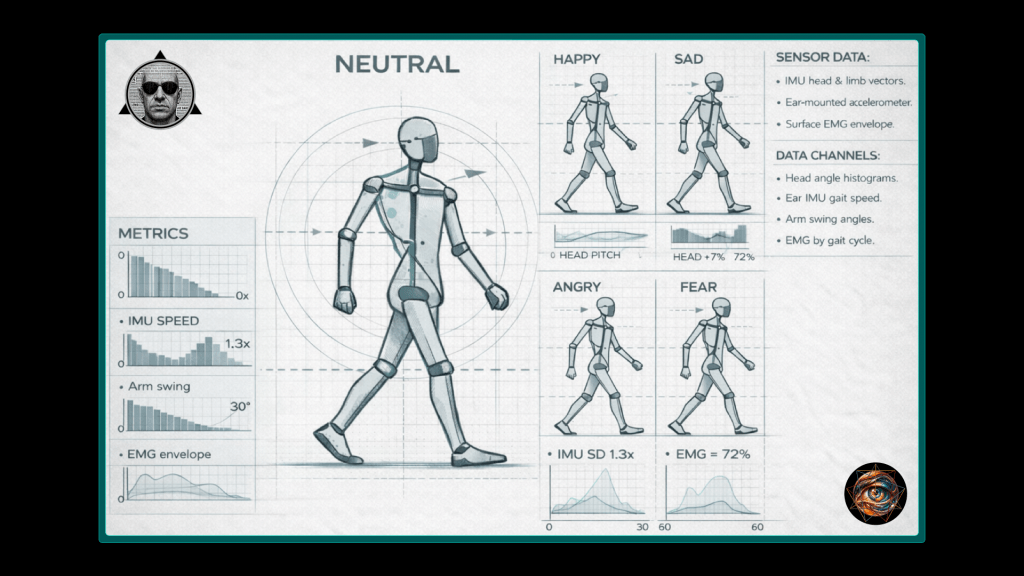

The system that claims to read your emotions operates through a logic of decomposition. It does not perceive a face; it perceives sixty-eight landmarks arranged in spatial relationship, each measured against a template derived from training data. It does not hear a voice; it hears pitch contours, spectral coefficients, and energy distributions parsed into feature vectors. Your walk becomes sixteen skeletal nodes tracked through three-dimensional space, joint angles and limb velocities computed frame by frame. The human subject enters the system whole and emerges as a collection of signals, each processed through classifiers trained to select from a menu of non-neutral categorical labels:

- Happy

- Sad

- Angry

- Afraid

- Disgusted

- Surprised
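The last step of that decomposition can be sketched in a few lines. What follows is a minimal illustration, not any vendor's code: a linear classifier with a softmax over the six labels above. The feature vector, weights, and biases are hypothetical placeholders for values a real system would extract and learn. Note what the architecture lacks: any way to answer "none of these."

```python
import math

# The six categorical labels from the menu above. There is no "unknown"
# option, so every input is forced into one of them.
LABELS = ("happy", "sad", "angry", "afraid", "disgusted", "surprised")

def softmax(scores):
    """Convert raw per-label scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def classify(features, weights, biases):
    """Linear classifier: one weight vector and one bias per label.

    `features` stands in for a flattened feature vector (landmark coordinates,
    acoustic coefficients, joint angles). `weights` and `biases` are
    hypothetical parameters a real system would learn from training data.
    Returns the top label and its probability.
    """
    scores = [sum(w * x for w, x in zip(row, features)) + b
              for row, b in zip(weights, biases)]
    probs = softmax(scores)
    # max() returns the first index on ties, so a uniform output still yields a label.
    best = max(range(len(LABELS)), key=lambda i: probs[i])
    return LABELS[best], probs[best]
```

Fed a feature vector and weights that carry no information at all, the classifier still returns a label ("happy", the first on the menu) with a probability of one in six. It does not abstain; it picks.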

The choice of what to measure determines what can be seen, and what can be seen determines what the system believes it knows. A face reduced to landmarks loses the context that gives expression meaning. A voice reduced to acoustic properties loses the words that might contradict the tone. A walk reduced to skeletal geometry loses the history that explains why someone moves as they do. The system’s confidence is inversely proportional to its understanding; it knows everything about the signal and nothing about the person.

Your Face Is A Minefield

Facial emotion recognition relies on the Facial Action Coding System, a 527-page taxonomic framework that decomposes expression into Action Units corresponding to specific muscle contractions. AU12 denotes the pulling of lip corners by the zygomatic major. AU6 denotes the raising of cheeks by the orbicularis oculi. When both activate together, the system classifies a Duchenne smile, the configuration associated with genuine happiness, the smile that reaches the eyes. When AU12 activates alone, the system classifies a Pan-Am smile: the flight attendant’s courtesy held a beat too long, the politician’s grin maintained through the handshake and the photo and the next handshake, pleasant and hollow and legible as performance to any human observer. The machine aspires to that legibility.

The machine wants to know when you are faking.
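The Duchenne/Pan-Am distinction described above reduces to a rule over Action Units. A minimal sketch, assuming an upstream detector has already reported which AUs are active; real FACS coding also scores intensity on an A through E scale, which this ignores, and the function name is hypothetical.

```python
def classify_smile(active_aus):
    """Apply the AU6/AU12 smile rule to a set of active Action Units.

    `active_aus` is assumed to be a set of AU numbers such as {6, 12}.
    Detecting those AUs from pixels is a separate, upstream step.
    """
    if 12 in active_aus and 6 in active_aus:
        return "Duchenne smile"  # lip corners pulled and cheeks raised
    if 12 in active_aus:
        return "Pan-Am smile"    # lip corners pulled, eyes uninvolved
    return "no smile"
```

The rule never sees who is smiling, at whom, or why; a grieving person's polite smile and a con artist's practiced one land in the same bin.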

In controlled laboratory settings, this aspiration achieves apparent success. State-of-the-art models report 98.6% accuracy classifying happiness and neutral states when subjects produce posed expressions under standardized lighting with frontal camera angles. The numbers inspire confidence and are largely meaningless. Spontaneous expressions in naturalistic environments reduce performance to barely above chance.

The posed-spontaneous gap reflects a fundamental misalignment between training conditions and deployment contexts. Laboratory datasets feature actors deliberately producing exaggerated expressions; real-world faces are subtler, faster, obscured by hands or hair, captured from oblique angles under variable illumination, and frequently mixed—simultaneous joy and anxiety, fear and hope, the emotional chords that single-note classification cannot render. Training on theatrical expressions produces systems that detect theater. The technology reads masks with precision and mistakes them for faces.

The bias compounds across demographics. A model trained on AffectNet, a dataset of some four hundred thousand annotated facial images, produces divergent predictions for identical Action Unit configurations when skin tone varies. African-descent faces receive systematically different intensity scores than European-descent faces displaying the same muscle contractions. The disparity cannot be dismissed as training data imbalance; even racially balanced datasets fail to eliminate discrimination. The measurement framework itself encodes cultural assumptions about emotional display, treating one population’s baseline morphology as deviation from an unmarked norm. The detainee in the metal chair is not merely surveilled; he is surveilled by a system calibrated to someone else’s face.
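A disparity of this kind is detected with a matched audit: score the same Action Unit configurations across groups and compare the averages. A minimal sketch; the record format and function name are hypothetical, and any numbers fed to it here would be illustrative, not the findings cited above.

```python
from statistics import mean

def matched_gap(records):
    """Compare mean predicted intensity across groups on matched AU inputs.

    `records` is a list of (group, au_config, score) tuples. `au_config` is a
    hashable description of the coded muscle movements (e.g. a frozenset of
    active AUs); `score` is the model's predicted intensity.
    Returns (per-group means, largest gap between any two group means).
    """
    scores, configs = {}, {}
    for group, au_config, score in records:
        scores.setdefault(group, []).append(score)
        configs.setdefault(group, set()).add(au_config)
    # The comparison only means something if every group saw the same configurations.
    if len({frozenset(c) for c in configs.values()}) != 1:
        raise ValueError("groups were not scored on identical AU configurations")
    means = {group: mean(vals) for group, vals in scores.items()}
    return means, max(means.values()) - min(means.values())
```

On matched inputs, a gap well above zero means the model is responding to something other than the coded muscle movement.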

Your Voice Is A Snitch

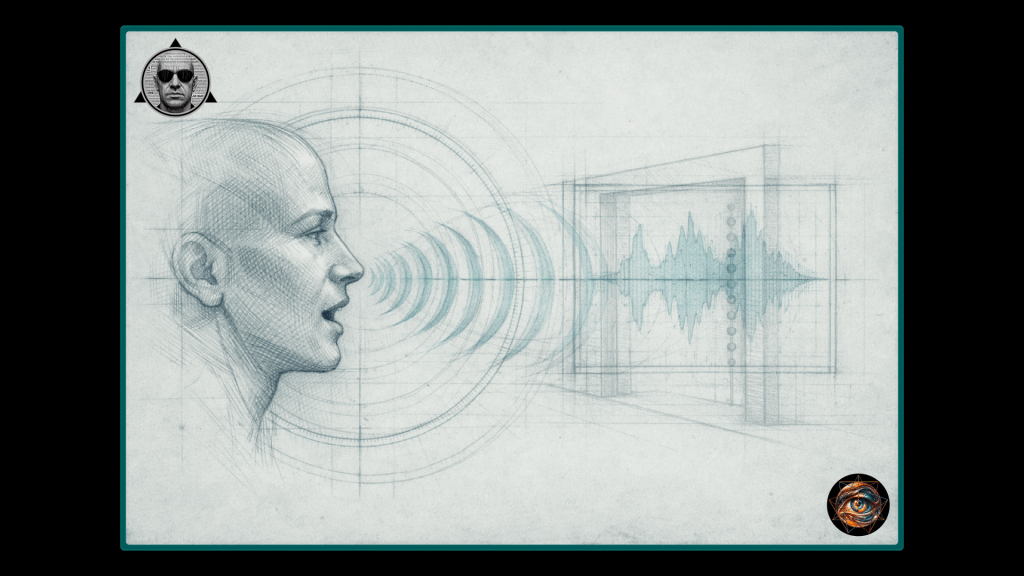

Voice emotion recognition treats speech as testimony extracted under duress. The system synthesizes three signal streams: lexical content (the words spoken), visual cues (facial expressions accompanying speech), and acoustic properties (pitch, tone, rhythm, energy, Mel Frequency Cepstral Coefficients). Each stream offers potential betrayal. The voice that trembles contradicts the words that insist on calm. The pitch that rises undermines the sentiment that claims confidence. Your own larynx becomes the witness against you, offering evidence you never consented to provide.
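Two of the acoustic properties listed above are simple enough to compute by hand. A minimal sketch in plain Python: root-mean-square energy as a loudness proxy, and a pitch estimate taken from the autocorrelation peak. The 60 to 400 Hz search range is an assumption for adult speech. MFCCs take more machinery (a short-time Fourier transform, a mel filterbank, a logarithm, and a discrete cosine transform) and are usually computed with a library such as librosa rather than by hand.

```python
import math

def rms_energy(frame):
    """Root-mean-square amplitude of one audio frame (a loudness proxy)."""
    return math.sqrt(sum(x * x for x in frame) / len(frame))

def estimate_pitch(frame, sample_rate, fmin=60.0, fmax=400.0):
    """Estimate fundamental frequency (Hz) from the autocorrelation peak.

    Only lags corresponding to fmin..fmax Hz are searched (an assumed speech
    range). Production pitch trackers are more robust; this is the basic idea.
    """
    min_lag = int(sample_rate / fmax)
    max_lag = int(sample_rate / fmin)
    best_lag, best_corr = min_lag, float("-inf")
    for lag in range(min_lag, min(max_lag, len(frame) - 1) + 1):
        # Similarity between the frame and a copy of itself shifted by `lag`.
        corr = sum(frame[i] * frame[i + lag] for i in range(len(frame) - lag))
        if corr > best_corr:
            best_lag, best_corr = lag, corr
    return sample_rate / best_lag
```

A 200 Hz sine sampled at 8 kHz comes back as 200 Hz. A real voice is messier, and every wobble the estimator reports becomes an input the emotion classifier will interpret.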

In practice, the acoustic channel dominates commercial deployment, since capturing a voice requires only a microphone while capturing a face requires a camera with line of sight. Current systems achieve approximately 70% accuracy classifying anger, fear, happiness, and sadness from audio alone. Multimodal approaches combining audio with text transcripts improve performance by twenty percentage points. These figures qualify as “moderate” by clinical standards: useful for population-level analytics, insufficient for high-stakes individual assessment without human oversight. The qualifier is crucial and routinely ignored. Contact centers deploy real-time sentiment detection to trigger supervisor alerts when customers exhibit vocal distress. Workplace monitoring systems analyze email tone and meeting participation to infer employee emotional states. The technology conflates correlation with causation, treating statistical association as confession.
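The supervisor alerts mentioned above are, at their simplest, a threshold on a rolling average. A minimal sketch, assuming an upstream voice model emits a distress probability per utterance; the class name, window size, and threshold are hypothetical. Nothing in the trigger knows how accurate the upstream scores are.

```python
from collections import deque

class DistressAlert:
    """Raise a supervisor alert when recent distress scores stay high.

    Scores are assumed to be per-utterance probabilities in [0, 1] from some
    upstream model. `window` and `threshold` are hypothetical settings.
    """

    def __init__(self, window=5, threshold=0.7):
        self.scores = deque(maxlen=window)  # oldest score drops off automatically
        self.threshold = threshold

    def update(self, score):
        """Add one score; return True once the full window's mean reaches the threshold."""
        self.scores.append(score)
        full = len(self.scores) == self.scores.maxlen
        return full and sum(self.scores) / len(self.scores) >= self.threshold
```

One calm utterance can hold off the alert, and a run of high scores fires it, whether or not those scores were right.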

The voice carries cultural freight the algorithm cannot unpack. A rising intonation that signals uncertainty in some dialects signals emphasis in others. A flat affect that would read as depression in a neurotypical speaker may simply be characteristic of autistic speech patterns. A loud, rapid delivery that registers as anger may be the normal conversational register of communities the training data underrepresented. The system imposes a single emotional grammar on a polyglot world and calls the resulting mistranslations objective measurement. The informant testifies in a language the court does not speak; the court convicts anyway.

Body Language

Gait recognition represents the frontier of behavioral biometrics, not yet commercially viable but advancing rapidly. The premise is seductive: unlike the face, which can be trained to neutrality, and unlike the voice, which can be modulated with practice, the walk operates below conscious control. Sadness manifests in hunched posture and folded shoulders. Anger manifests in forward lean, stretched neck, hurried pace. Happiness opens the shoulders and amplifies body swing. Fear contracts and quickens. The body, it seems, cannot help but confess.

The premise is itself a lie. Bodies lie constantly, and with practice they lie fluently. The applicant who adopts a confident stride for the interview despite interior terror has learned to speak a somatic dialect that contradicts her autonomic state. The mourner who walks briskly to the funeral to avoid collapsing in the parking lot performs composure as surely as any actor. The chronic pain patient whose gait conceals agony has spent years perfecting a syntax of normalcy. The system mistakes statistical correlation for somatic truth; it sees the average walker and infers a universal grammar, unable to account for individuals whose bodies have learned a different language.

Yet the technology proceeds regardless. Multi-Scale Adaptive Graph Convolution Networks construct skeletal graphs from walking patterns, using coarse-grained analysis for overall gait state and fine-grained graphs for localized joint movements. The algorithm recognizes that different emotions exhibit distinct temporal signatures: anger manifests in shoulder and arm amplitude during later frames of the gait cycle, while sadness appears in torso forward lean and spinal curvature during earlier frames. The skeleton becomes a text the machine has learned to parse, even when the text is fiction.
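The building block such networks extend is the graph convolution. The exact equations of the multi-scale adaptive architecture are not given here; the layer below is the standard graph convolutional network update, offered as a sketch of the general technique. $A$ is the skeleton's adjacency matrix (1 where two joints share a bone), $I$ the identity, $H^{(l)}$ the per-joint features at layer $l$ (at the input, joint coordinates for a frame), $W^{(l)}$ a learned weight matrix, and $\sigma$ a nonlinearity:

$$
H^{(l+1)} = \sigma\left(\tilde{D}^{-1/2}\,\tilde{A}\,\tilde{D}^{-1/2}\,H^{(l)}\,W^{(l)}\right),
\qquad \tilde{A} = A + I,
\qquad \tilde{D}_{ii} = \sum_{j} \tilde{A}_{ij}
$$

Each joint's new features mix its own with its neighbors', so an elbow's representation comes to reflect shoulder and hand. Adaptive variants commonly add a learned matrix to the fixed adjacency so the network can link joints that share no bone; multi-scale variants repeat the operation on coarser graphs that pool joints into body parts. Temporal convolutions across frames, not shown, capture the early-versus-late timing described above.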

The decomposition is precise enough to visualize. Sixteen nodes map the body from root to feet: spine, neck, head, shoulders, elbows, hands, hips, knees, feet. Each node’s position is tracked through space as the subject walks. Probability distributions capture the statistical signature of each emotional state—head movement angle, arm swing arc, muscle activation envelope. Happy specimens exhibit open shoulders and pronounced swing. Sad specimens hunch and fold. Angry specimens lean forward with stretched necks. The language of naturalist field guides applies without irony: the researcher catalogs emotional fauna by their locomotive phenotype, specimens collected and classified, their interiority reduced to observable trait.
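The sixteen-node skeleton and one of the posture features above can be sketched directly. The joint names and bone list are assumptions chosen to match the node list in the text; real datasets name and order joints differently. Forward lean is computed as the angle between the root-to-neck vector and vertical, with the y axis assumed to point up.

```python
import math

# Hypothetical 16-joint skeleton matching the node list above: 15 bones form a tree.
EDGES = [
    ("root", "spine"), ("spine", "neck"), ("neck", "head"),
    ("neck", "l_shoulder"), ("l_shoulder", "l_elbow"), ("l_elbow", "l_hand"),
    ("neck", "r_shoulder"), ("r_shoulder", "r_elbow"), ("r_elbow", "r_hand"),
    ("root", "l_hip"), ("l_hip", "l_knee"), ("l_knee", "l_foot"),
    ("root", "r_hip"), ("r_hip", "r_knee"), ("r_knee", "r_foot"),
]
JOINTS = sorted({joint for edge in EDGES for joint in edge})

def adjacency():
    """Symmetric 0/1 adjacency matrix over JOINTS (1 where a bone connects them)."""
    index = {joint: i for i, joint in enumerate(JOINTS)}
    n = len(JOINTS)
    a = [[0] * n for _ in range(n)]
    for u, v in EDGES:
        a[index[u]][index[v]] = a[index[v]][index[u]] = 1
    return a

def forward_lean_degrees(root, neck, up=(0.0, 1.0, 0.0)):
    """Angle in degrees between the root->neck vector and the (unit) up vector.

    `root` and `neck` are (x, y, z) positions for a single frame.
    """
    v = [n - r for n, r in zip(neck, root)]
    norm = math.sqrt(sum(c * c for c in v))
    if norm == 0:
        raise ValueError("root and neck coincide")
    cos_theta = sum(a * b for a, b in zip(v, up)) / norm
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_theta))))
```

Upright posture gives 0 degrees; a neck displaced one unit forward for every unit up gives 45. The number is exact and says nothing about why the torso tilts.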

If walking itself becomes emotionally legible, what refuge remains?

The face can be trained, the voice modulated, but the body moves before consciousness intervenes. Gait recognition targets the autonomic, the habitual, the deeply embodied. It reads the nervous system directly, bypassing the performative layer where defense traditionally operates. The confession it extracts is one you never knew you were making.

The Flesh Made Data

Physiological monitoring bypasses performance entirely, or so its proponents claim. Wearable sensors measure heart rate variability, electrodermal activity (skin conductance), blood volume pulse, and skin temperature. These signals operate through autonomic pathways less amenable to voluntary control than facial muscles or vocal cords. When you are afraid, your heart rate accelerates and your skin conductance spikes regardless of what your face does. The body becomes a polygraph worn on the wrist, and you have voluntarily strapped it there.
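Heart rate variability, the first signal listed, is usually summarized with simple statistics over the intervals between beats. A minimal sketch of one standard time-domain measure, RMSSD (root mean square of successive differences); the input is assumed to be RR intervals in milliseconds, already extracted from the sensor's pulse signal.

```python
import math

def rmssd(rr_intervals_ms):
    """Root mean square of successive differences between heartbeat intervals.

    `rr_intervals_ms` is a sequence of RR intervals (milliseconds between
    consecutive beats). Returns RMSSD in milliseconds.
    """
    if len(rr_intervals_ms) < 2:
        raise ValueError("need at least two RR intervals")
    diffs = [b - a for a, b in zip(rr_intervals_ms, rr_intervals_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))
```

Intervals of 800, 810, 790, and 800 ms give an RMSSD of about 14.1 ms. Lower values are often read as a sign of stress, but exercise, posture, and caffeine move the number too, which is the ambiguity discussed below.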

Consider what this means. The polygraph was designed as an instrument of interrogation, deployed by authorities against subjects who understood themselves to be under suspicion. The smartwatch inverts the architecture: the subject becomes her own interrogator, wearing the device that measures her constantly, uploading the data to servers she does not control, granting access to parties she cannot identify. The technology migrated from laboratory to wrist without passing through the regulatory scrutiny that governs medical devices, because it markets itself as wellness rather than surveillance. The framing is a lie the device tells about itself.

Consumer-grade devices already achieve troubling accuracy. The Samsung Galaxy Watch, analyzing photoplethysmography and galvanic skin response, attains 84% accuracy classifying emotional valence and 92% accuracy classifying arousal in controlled settings. Ensemble deep learning architectures processing accelerometer, blood volume pulse, and electrodermal signals reach 90% accuracy; personalized models trained on individual baselines reach 95%. The advantage over facial and vocal analysis is genuine: physiological signals resist conscious manipulation. The disadvantage is equally genuine: they resist conscious interpretation. Identical heart rate increases may signal fear, excitement, physical exertion, caffeine consumption, or the onset of arrhythmia. Elevated skin conductance may indicate anxiety or simply a warm room. The system identifies arousal reliably but struggles with valence; it knows you are activated without knowing whether you are activated toward joy or dread.
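The personalized-baseline idea, and its blind spot, can be shown in miniature. A minimal sketch, not the ensemble models cited above: each signal is converted to a z-score against the wearer's own resting readings, and the z-scores are averaged into an arousal estimate. Signal names, equal weighting, and the function name are assumptions.

```python
from statistics import mean, stdev

def arousal_score(current, baseline):
    """Average z-score of current readings against a personal resting baseline.

    `current` maps signal names (e.g. "heart_rate") to the latest reading;
    `baseline` maps the same names to lists of at least two resting readings
    from the same wearer. The result measures distance from rest only:
    there is no valence term anywhere in it.
    """
    zs = []
    for name, value in current.items():
        history = baseline[name]
        spread = stdev(history)
        # A perfectly flat baseline gives no scale to measure against; treat as 0.
        zs.append((value - mean(history)) / spread if spread else 0.0)
    return mean(zs)
```

With a resting heart rate of 60, 62, and 64 bpm, a reading of 70 scores 4.0, whether it came from dread, a staircase, or an espresso. The function has nowhere to put valence.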

This ambiguity becomes dangerous when the technology informs decisions—when the elevated heart rate of a job applicant is read as deception rather than nervousness, when the skin conductance spike of a patient is read as agitation rather than pain. The deeper danger is normalization. Wearable sensors require contact, limiting covert application today. Tomorrow’s sensors may not. Thermal imaging detects blood flow beneath the skin from a distance. Radar-based systems infer heart rate through clothing. Ambient physiological surveillance, invisible and continuous, represents the logical terminus of a trajectory the smartwatch has already begun.

The flesh made data, the data made decision, the decision made without appeal.

The Synthesis That Multiplies Error

Modern systems increasingly deploy multimodal fusion, combining face, voice, gait, and physiology to triangulate emotional inference. The theory is that cross-validation reduces false positives; if the face says happy but the voice says anxious and the heart rate says aroused, the system can weigh conflicting signals rather than relying on any single channel. The theory is elegant. The practice is not.

Each modality carries its own biases. Facial recognition’s demographic disparities do not cancel voice recognition’s cultural assumptions; they compound. Gait recognition’s confusion of performance with truth does not correct physiological monitoring’s arousal-valence ambiguity; it adds another layer of error. A system that averages three wrong answers does not produce a right one. The fusion creates false confidence precisely because it appears robust. Decision-makers who might question a single-channel classification defer to the apparent triangulation, unaware that the agreement reflects shared flaws in training data rather than independent confirmation. The witnesses corroborate each other because they learned their stories from the same source.
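The arithmetic of shared error is easy to see in a late-fusion sketch, where each modality produces a probability per label and the system averages them. This is one common fusion scheme among several; the function name and inputs are hypothetical.

```python
LABELS = ("happy", "sad", "angry", "afraid", "disgusted", "surprised")

def late_fusion(prob_vectors, weights=None):
    """Weighted average of per-modality probability vectors (late fusion).

    Each vector holds one probability per label in LABELS, e.g. one from the
    face model, one from the voice model, one from the gait model. Equal
    weights are assumed unless given. Returns the top label and its fused score.
    """
    weights = weights or [1.0] * len(prob_vectors)
    total = sum(weights)
    fused = [sum(w * p[i] for w, p in zip(weights, prob_vectors)) / total
             for i in range(len(LABELS))]
    best = max(range(len(LABELS)), key=lambda i: fused[i])
    return LABELS[best], fused[best]
```

If face, voice, and gait each assign 0.5 to "angry" because all three inherited the same skewed training data, the fused result is "angry" at 0.5: three votes that look like corroboration and carry the evidence of one. Averaging cancels errors only when they are independent.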

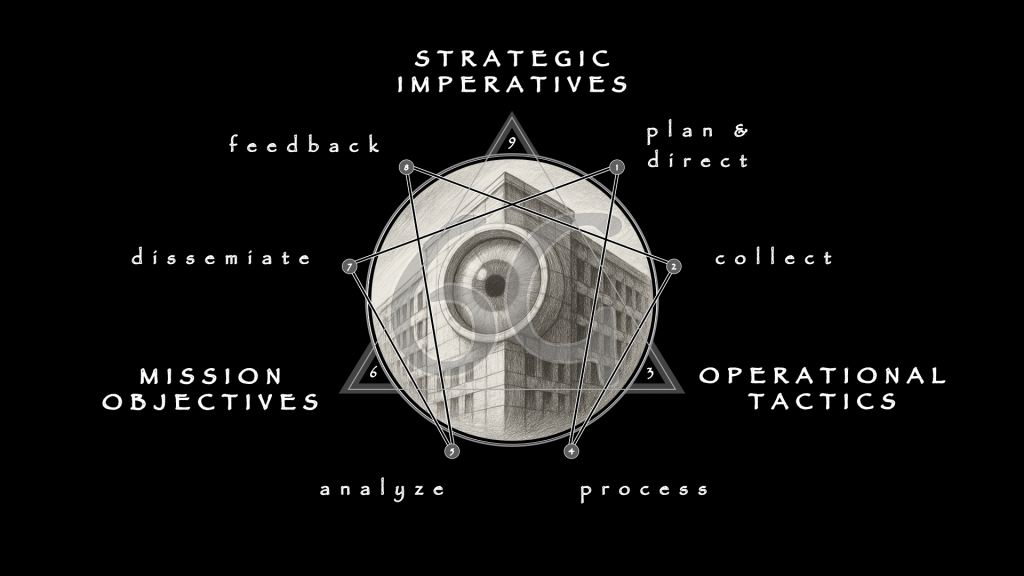

Emotion recognition is intelligence work, the target merely shifted from foreign adversary to domestic population. The apparatus developed to read enemies abroad now reads employees, customers, students, patients, suspects. The same logic of extraction applies, the same confidence in signals over subjects, the same institutional hunger for legibility regardless of accuracy. The project is theological in its ambition: the machine aspires to omniscience, to read the soul through its somatic traces. The aspiration outpaces the engineering. The god the system wants to become is a god it cannot be.

Understanding how the machine sees is the first requirement for evading its gaze. The system’s decomposition is also its vulnerability. A face that produces unexpected Action Unit combinations, a voice that violates acoustic-emotional correlations, a gait that refuses categorical signature, a physiology that masks arousal or mimics calm—each exploits the irreducible distance between what the machine measures and what the person experiences. The system builds blueprints and mistakes them for buildings. Every building contains rooms the architect never drew.
