The Discipline of Disappearance

The practitioner enters the monitored space having already begun. He adjusted his gait in the parking garage, smoothing the stride into neutral cadence before the first camera acquired him. His face settled into pleasant vacancy during the elevator ride, the expression he has rehearsed until it requires no effort. His voice, when he speaks, carries the measured warmth of professional courtesy without the pitch variations that would betray enthusiasm or anxiety. He is not suppressing emotion, but selecting what to display from a repertoire he has spent months developing. The surveillance system captures his image, extracts his features, runs its classifications. It finds nothing actionable. He has not disappeared, but become unmemorable, which in the attention economy of algorithmic surveillance amounts to the same thing.

This is the discipline of disappearance: not the absence of presence but the curation of it, the deliberate management of what the sensors can see. The goal is not to feel nothing, nor to hollow out the inner life until no signal remains for extraction. Rather, it is to preserve authentic emotional experience by controlling when, where, and to whom that experience becomes visible.

Privacy is not the absence of connection, but the exercise of choice.

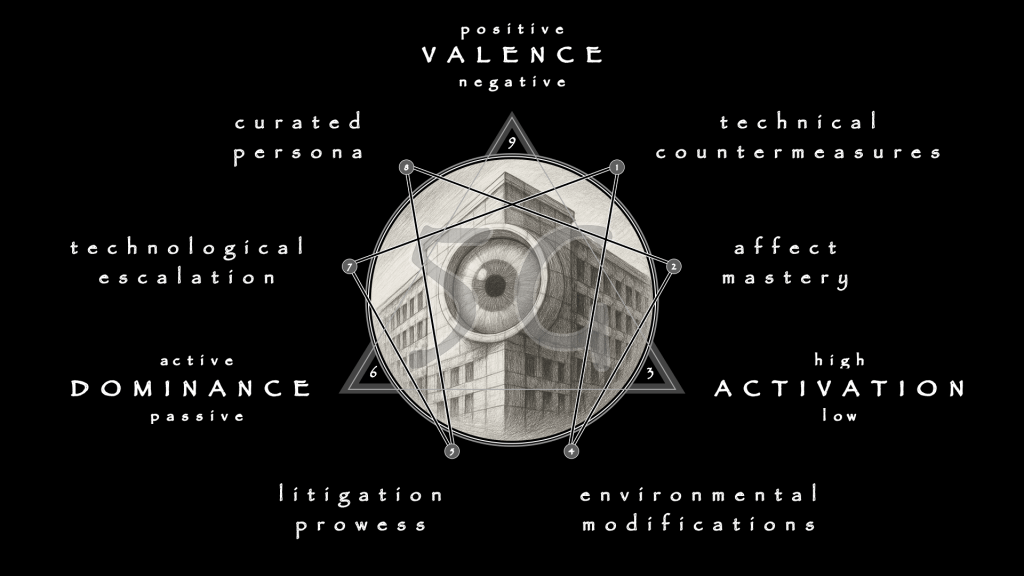

Technical Countermeasures

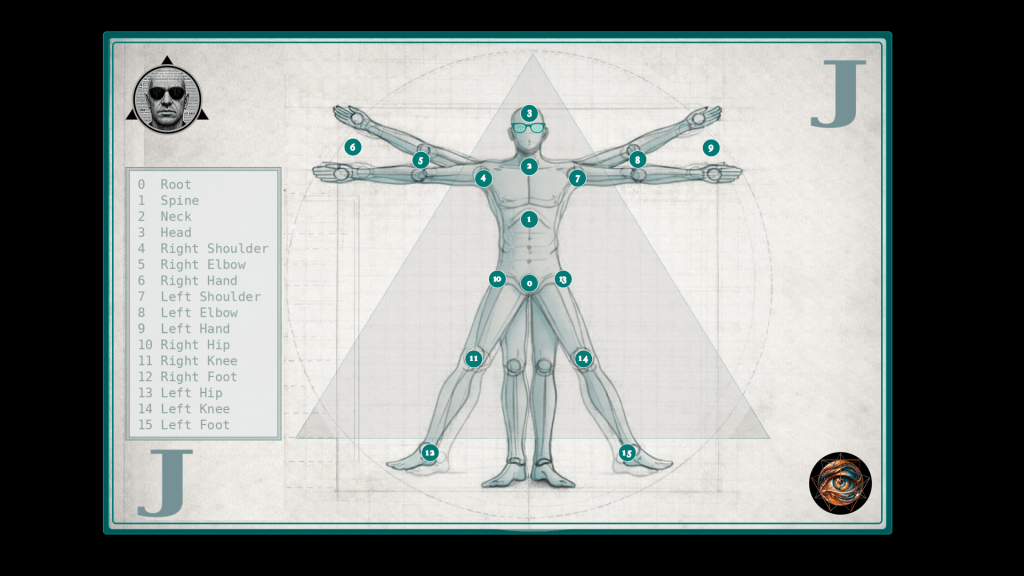

The machine sees through pattern recognition, and it can be defeated by pattern disruption. Adversarial machine learning offers the most technically sophisticated evasion, using carefully designed perturbations to fool classification systems while remaining imperceptible to human observers. Generative Adversarial Networks produce patches that, applied to eyeglass frames, achieve over 80% success in causing facial recognition systems to fail. The perturbations exploit the gap between human and machine perception. The glasses look merely fashionable to colleagues, but to the algorithm they constitute noise that scrambles the feature extraction pipeline.

The wearer becomes a signal the system cannot resolve.

The technique extends beyond accessories. Makeup patterns informed by adversarial research can disrupt facial landmark detection. Hairstyles that partially occlude the face eliminate data the system requires. The principle is consistent: identify what the machine needs to see, then prevent clear seeing. The face that refuses to resolve into the sixty-eight landmarks the system expects becomes illegible without becoming conspicuous. The goal is not to look like you are hiding, but to be hidden while looking like everyone else.
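The logic of an adversarial perturbation can be sketched in a few lines. What follows is a toy, not a working attack: the linear "match" scorer, its weights, and the feature values are all invented for illustration, and real recognition systems operate on high-dimensional image data where many far smaller changes add up. The sketch assumes only that the attacker knows which direction each feature pushes the score.

```python
# Toy illustration only: a hypothetical linear "match" scorer, not a real
# face-recognition model. All weights and feature values are invented.

def score(weights, bias, features):
    """Linear score; a positive value means the toy system reports a match."""
    return sum(w * x for w, x in zip(weights, features)) + bias


def sign(value):
    """Return -1, 0, or 1 according to the sign of value."""
    return (value > 0) - (value < 0)


def perturb(weights, features, epsilon):
    """Shift each feature by at most epsilon in the direction that lowers the score.

    For a linear scorer the gradient of the score with respect to the
    features is the weight vector itself, so stepping against sign(w)
    is the gradient-sign idea in its simplest form.
    """
    return [x - epsilon * sign(w) for w, x in zip(weights, features)]


weights = [0.9, -0.4, 0.7, 0.2, -0.6]   # hypothetical model weights
bias = -0.5                             # hypothetical decision offset
features = [0.8, 0.1, 0.6, 0.5, 0.2]    # hypothetical extracted features

original_score = score(weights, bias, features)
adversarial = perturb(weights, features, epsilon=0.25)
adversarial_score = score(weights, bias, adversarial)

print(f"original score:    {original_score:+.3f}")
print(f"adversarial score: {adversarial_score:+.3f}")
```

With these invented numbers the score crosses from positive to negative even though no single feature moves by more than the chosen bound. The point is the principle rather than the magnitudes: a bounded change, aimed precisely, does more damage than a large change aimed at random.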

Voice emotion recognition requires different countermeasures. The system analyzes pitch contours, spectral coefficients, rhythm, energy—acoustic properties that correlate with emotional states in training data. Voice modulators can alter these properties in real time, confusing algorithms that rely on prosodic patterns. Monotone delivery strips the variation that classification requires. Deliberate manipulation of speech rhythm—pausing where the system expects flow, accelerating where it expects pause—violates the acoustic-emotional correlations the model has learned.

The voice becomes static where the system expected signal.

Gait recognition presents the most challenging technical problem because the body moves before consciousness intervenes. Yet mechanical intervention remains possible. Different footwear alters stride length and ground contact patterns. Subtle weights redistribute balance. Conscious modification of arm swing, head position, and walking pace can disrupt the skeletal signature the algorithm seeks. Practitioners who develop a neutral gait—neither the expansive stride of happiness nor the contracted shuffle of sadness—create a baseline that correlates with no categorical emotion. The body can learn to lie as fluently as the face. Although it requires more deliberate practice, such fluency is attainable.

Physiological signals present the hardest target. Heart rate and electrodermal activity such as skin conductance operate through autonomic pathways resistant to voluntary control. Yet biofeedback training can develop limited mastery. Meditation practices that reduce baseline arousal make deviations less pronounced. Controlled breathing modulates heart rate variability. Practitioners who arrive at the monitored encounter having already lowered their physiological baseline present less variation for the system to interpret. They cannot eliminate the signals, but they can compress the dynamic range until the algorithm’s classifications become uncertain.

The body’s testimony becomes ambiguous, and ambiguity is acquittal.
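Why compressing the dynamic range matters can be shown with a deliberately crude model. The sketch below assumes a classifier that flags any reading deviating from baseline by more than a fixed cutoff; the heart-rate samples, the baseline, the cutoff, and the compression factor are all hypothetical, and real affect classifiers are far more elaborate than a single threshold.

```python
# Toy illustration only: the readings, baseline, and threshold are invented,
# and a fixed cutoff stands in for a much more complex classifier.

def flagged(readings, baseline, threshold):
    """Count readings whose deviation from baseline exceeds the threshold."""
    return sum(1 for r in readings if abs(r - baseline) > threshold)


def compress(readings, baseline, factor):
    """Scale every deviation from baseline by factor (0 < factor <= 1)."""
    return [baseline + factor * (r - baseline) for r in readings]


# Hypothetical heart-rate samples (beats per minute) during one meeting.
heart_rate = [68, 72, 91, 70, 88, 95, 69, 74, 86, 71]
baseline = 70
threshold = 12  # hypothetical cutoff for an "arousal" label

untrained = flagged(heart_rate, baseline, threshold)
trained = flagged(compress(heart_rate, baseline, 0.5), baseline, threshold)

print(f"flagged before compression: {untrained}")
print(f"flagged after compression:  {trained}")
```

With these invented numbers, four samples cross the cutoff before compression and one after. The signal has not vanished; it has been pulled toward the region where the toy classifier has nothing to say.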

Affect Mastery

Technical countermeasures address the sensors; psychological countermeasures address the source. The Stoic philosophical tradition offers a framework for voluntary emotional regulation that predates algorithmic surveillance by two millennia but proves remarkably applicable to it. The Stoics distinguished between the initial involuntary response to stimulus—the flinch, the flush, the spike of arousal—and the subsequent cognitive evaluation that transforms sensation into sustained emotion. Although the first cannot be eliminated, the second can be governed.

The dichotomy of control grounds the practice. External events lie outside your control. Your responses to those events lie within it.

The surveillance camera exists. Your facial expression in response to its presence, however, is yours to determine. The algorithm classifies. Your internal state need not match its classification. This cognitive reframing does not suppress emotion but redirects it, shifting energy from reactive display to deliberate choice.

The practitioner experiences frustration, fear, anger, and then chooses whether and how to express them, maintaining an internal locus of identity that surveillance cannot destabilize.

Cognitive Behavioral Therapy translates Stoic principles into clinical practice with documented efficacy. The techniques identify cognitive distortions—catastrophizing, overgeneralization, emotional reasoning—and replace them with rational reappraisal. Applied to surveillance, the employee who notices the camera and thinks “They’re watching everything; I’ll be fired for any mistake” can reframe to “The system generates data; data requires interpretation; interpretation is fallible; my task is to perform my job, not to perform for the algorithm.” Reframing reduces the anxiety that produces the facial expressions that confirm the system’s suspicion.

Cognitive intervention interrupts the feedback loop before it completes.

Classical theatrical training provides complementary techniques for muscular control. The face contains forty-three muscles capable of producing over ten thousand distinct configurations. Most people control only a fraction consciously. Actors train to expand that control through systematic exercise:

- The lion-mouse stretch that activates all facial muscles through maximum expansion and contraction

- Self-massage that loosens musculature for fluid transition

- Exaggerated emotion practice that builds muscle memory

- Cheek exercises that strengthen expressive infrastructure

- Brow lifts that develop forehead control

- Eye exercises that practice sustained, purposeful gazing

Thirty minutes of daily practice, maintained for months, transforms the face from involuntary billboard into instrument under conscious direction.

The integration matters more than any component. Stoic reappraisal prevents involuntary responses from escalating into visible display. Stagecraft develops muscular capacity to maintain chosen expressions regardless of internal state. Together, they constitute affect mastery: the ability to determine what your face, voice, and body communicate rather than having that communication determined for you. The camera sees what you choose to show, and the algorithm classifies whatever signal you decide to present.

Environmental Modifications

Whereas technical and psychological countermeasures address the individual, environmental modifications address the context. The first principle is reconnaissance: knowing where cameras exist, where microphones capture, where sensors operate. The office that scores video calls does not disclose the algorithm’s criteria. The shopping mall does not advertise which aisles are monitored. Yet inference is possible. Cameras cluster at entrances, checkout zones, high-value merchandise areas. Meeting platforms with “engagement analytics” likely capture expression data. Practitioners who research their employer’s technology vendors, who observe camera placements during shopping, and who map the surveillance infrastructure of their daily transit, convert ambient threat into specific knowledge.

Knowledge enables tactical navigation.

The conversation requiring emotional authenticity occurs outside camera range. The meeting where genuine reaction might prove costly takes place with video disabled. The transit through monitored space adopts flat affect, and only the arrival at unmonitored destination permits relaxation. The practice resembles movement through hostile territory:

- Awareness of sightlines

- Avoidance of chokepoints

- Knowledge of which routes offer cover

The security contractor’s situational awareness becomes the civilian’s daily practice, because civilian space has become a theater of operations where the adversary’s weapons are cameras rather than rifles.

Crowd dynamics offer natural camouflage. In dense gatherings, individual expressions become harder to isolate, because processing resources are finite. The system monitoring a hundred faces simultaneously allocates less computational attention to each than the system monitoring only one.

The practitioner who moves through crowded space benefits from the limits of mass surveillance, becoming one signal among many, his own emotional signature diluted by surrounding noise.

Strategic choices extend beyond momentary navigation. The worker who can choose employment in EU jurisdictions gains legal protection unavailable elsewhere. The consumer who patronizes retailers without emotion AI denies data to systems that would otherwise capture it. The citizen who supports privacy-preserving municipal policies contributes to an environment where surveillance becomes harder to deploy. Individual choices aggregate into collective conditions. The environment is not fixed terrain but constructed landscape, which can be influenced by those who understand the stakes.

Litigation as Countermeasure

Legal challenge constitutes an active countermeasure distinct from the passive shelter that regulation provides. Where law prohibits emotion AI, the subject benefits without acting; protection applies automatically. Litigation requires engagement: identifying violations, documenting harms, pursuing remedies through adversarial process. The distinction matters because litigation can create protection where regulation has not, forcing accountability through private enforcement.

Illinois’s Biometric Information Privacy Act (BIPA) exemplifies the mechanism. The employee whose biometric data is collected without consent can sue, and statutory damages of one thousand dollars per negligent violation, or five thousand per intentional or reckless one, aggregate through class action into liability that disciplines corporate behavior. The plaintiff need not prove actual harm; the violation itself creates the cause of action. Statutory damages and class aggregation make such cases economically viable for plaintiffs’ attorneys, creating a private enforcement bar that supplements regulatory capacity.
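The arithmetic of aggregation is simple enough to work through. In the sketch below the $1,000 and $5,000 figures are the statutory amounts for negligent and for intentional or reckless violations; the class size and the assumption of one violation per class member are hypothetical, and how violations are actually counted is a legal question this sketch does not attempt to settle.

```python
# Worked arithmetic only. The class size and violation count are hypothetical;
# the per-violation amounts are the statutory damages figures.

NEGLIGENT_PER_VIOLATION = 1_000
RECKLESS_PER_VIOLATION = 5_000


def exposure(class_members, violations_per_member, per_violation):
    """Aggregate statutory damages if every claimed violation is awarded."""
    return class_members * violations_per_member * per_violation


members = 2_000  # hypothetical number of employees in the class
low = exposure(members, 1, NEGLIGENT_PER_VIOLATION)
high = exposure(members, 1, RECKLESS_PER_VIOLATION)

print(f"exposure range: ${low:,} to ${high:,}")
```

Under these assumptions, a class of two thousand produces exposure between two and ten million dollars before any individual plaintiff has shown a dollar of actual harm, which is why the aggregation, not the individual award, does the disciplining.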

The defendant in criminal proceedings has different tools. Motion to exclude emotion AI evidence forces the prosecution to defend methodology under Daubert or Frye standards. The challenge requires investment—expert witnesses, legal research, hearing time—but creates benefits beyond the individual case. Successful exclusion establishes precedent; even unsuccessful challenge educates judges and creates appellate record.

Litigation generates discovery.

The organization defending against BIPA claims must produce documentation: what data was collected, how it was processed, what decisions it informed. This information, often otherwise unavailable, illuminates practices that operate in opacity. Discovery in one case becomes evidence in another; the litigation ecosystem generates understanding that strengthens future challenges. The plaintiff who pursues remedy contributes to a commons that benefits all subjects of emotional surveillance.

The Curated Persona

The comprehensive countermeasure integrates all preceding elements into coherent practice: the curated persona, a consistent emotional presentation deployed in surveilled contexts while authentic expression is reserved for private spaces. The concept acknowledges that total opacity is neither achievable nor desirable. The goal is not to feel nothing but to control visibility, maintaining public consistency that reveals nothing exploitable while preserving private contexts where the mask can be removed.

Philosophical grounding provides the foundation.

Stoic commitment to equanimity, the practice of remaining composed regardless of external circumstance, offers both rationale and method. The practitioner does not feign a lack of emotion but presents the same measured composure whether facing praise, criticism, or provocation. Consistency itself becomes the message. He does not react, does not leak, does not provide the variation that surveillance requires for meaningful classification. The flat line is not absence of life but refusal to perform on demand.

Behavioral consistency reinforces the grounding. Routine responses to routine stimuli create a baseline that reveals nothing because it correlates with everything. The discipline develops standard reactions: the slight smile that acknowledges without engaging, the neutral attentiveness that satisfies social expectation without expressing interior state, the measured delivery that conveys competence without betraying feeling. These defaults are not suppression but selection—choosing from the available repertoire rather than displaying whatever arises.

Environmental adaptation preserves authenticity. Home, trusted relationships, designated private spaces become sanctuaries where genuine expression occurs without surveillance. The persona is armor worn in hostile territory, not identity transformation. The practitioner who maintains clear boundaries between public performance and private authenticity avoids the psychological corrosion that total performance produces. He knows which face is mask and which is his own; the distinction protects both privacy and sanity.

Practice maintains the discipline. Daily exercises rehearse the persona under simulated stress, just as meditation cultivates awareness of the distinction between feeling and display. The curated persona is a skillset, not a bag of tricks, and like any discipline it degrades without maintenance. Those who neglect training discover, under pressure, that the mask has slipped—and learn in that moment why the discipline exists.

The Cost and the Prize

Every countermeasure carries cost. Technical interventions require acquisition and maintenance, while conspicuous evasion may attract the attention it was meant to deflect. Psychological training demands not only sustained effort over months before capacity develops, but also a teacher. Environmental navigation constrains movement and forecloses opportunities available to those who accept surveillance as the price of access. Litigation consumes time, money, and emotional reserves, with outcomes uncertain and retaliation possible. The curated persona risks fragmentation if the boundary between mask and self erodes.

These costs are real and cannot be minimized.

The discipline of disappearance is a discipline, with all the effort that the term implies. The practitioner must commit to ongoing vigilance, to ongoing expenditure of resources that might otherwise flow elsewhere. The question is not whether the costs are worth bearing in abstraction but whether they are worth bearing compared to the alternative: compulsory emotional transparency, the interior extracted and processed and acted upon by institutions whose interests diverge from your own.

The prize is sanctuary—not perfect safety, but the preservation of space where authentic experience remains possible, where the self that feels and desires is not fully visible to systems that would optimize or discipline or control it. Orwell’s Winston Smith identified “the few cubic centimeters inside your skull” as the last refuge of freedom. The discipline of disappearance defends that refuge against instruments Orwell could not have imagined but whose logic he understood completely.

The Red Queen runs because she believes the race has no exit.

The discipline reveals exits she has not tried: technical interventions that blind the sensors, psychological training that governs the source, environmental navigation that avoids the gaze, litigation that constrains the watchers, the curated persona that determines what they see when watching succeeds. None of these exits leads to a world without surveillance. All of them lead to a self that surveillance cannot fully capture—a self that remains, despite everything, one’s own.

The Price of Invisibility

The discipline works. The practitioner moves through monitored space without surrendering the inner life to algorithmic extraction. The cameras capture an image; the microphones record a voice; the sensors track movement. None of them capture the individual who learns to run in directions the Red Queen never imagined, reaching ground the system cannot follow.

What is the cost to stand on that hallowed ground?

The Labor of Refusal

Emotional countersurveillance is itself emotional labor. The phrase typically describes the work of producing feelings—or the appearance of feelings—that a role requires. The nurse who maintains compassionate presence through the twelfth hour of a shift, the debt collector who performs friendly menace on demand, the content moderator who absorbs atrocity with stable affect so the platform remains brand-safe. Each performs work that exhausts, that erodes the boundary between authentic and performed emotion until the workers no longer know which feelings are their own. The surveillance apparatus discovered that this labor could be extracted at scale, but the extraction does not eliminate the labor. It shifts the burden to those who resist.

The practitioner who maintains a curated persona must perform emotional labor continuously.

The neutral expression held through the meeting, the measured tone sustained through the difficult call, the flat affect adopted during transit through monitored space—each requires effort, attention, energy diverted from other purposes. The labor is invisible to observers, which is precisely the point. Visible effort would defeat the purpose. Yet invisibility does not mean absence. The practitioner expends resources that the unsurveilled subject conserves, and the expenditure accumulates across hours, days, even years of practice.

The technology was designed to read emotional labor—to detect when the service worker’s smile is performed rather than felt, to identify when the employee’s engagement is manufactured rather than genuine. The countermeasure is more emotional labor, performed more skillfully, less detectable precisely because it is more total. The practitioner defeats the system by becoming better at the performance the system was built to penetrate. He wins the arms race by escalating it, investing more in emotional management than the technology can cost-effectively analyze.

The victory is real, and so is the exhaustion.

The Machine Within

The goal of emotional countersurveillance is to preserve authentic emotional experience, to maintain a space where the inner life remains unmeasured and unmapped. Yet the practice requires treating one’s own emotions as objects to be managed, signals to be controlled, data to be curated. The practitioner develops the same instrumental relationship to his affective life that the surveillance system seeks to impose. He becomes his own monitor, his own analyst, his own algorithm, parsing his expressions for leakage, scoring his voice for tells, evaluating his gait for emotional signature.

The watcher he carries is internal, and it never blinks.

The Stoic response to this understanding is that self-observation constitutes wisdom, not alienation. The practitioner who can notice anxiety arising without being compelled to express it, who can feel anger without being hijacked by it, who can experience fear while choosing his response—this practitioner has developed a capacity that serves far beyond the surveillance context. He wants this to be true. He needs it to be true, because the alternative is that the discipline he has undertaken corrodes the very thing it was meant to protect. The examined life, Socrates promised, is worth living. The examining life, the life that monitors itself continuously, must be worth living too.

Yet something escapes the Stoic account. The sage who cultivates equanimity does so for its own sake, seeking a life well-lived according to reason. The practitioner who cultivates the same equanimity as countersurveillance measure does so in response to external threat, his inner development shaped by the adversary he resists. The surveillance system has not captured his emotions, but it has captured his attention, his practice, his daily discipline. He organizes his inner life around the threat of extraction even when no extraction occurs. The system shapes him whether or not it reads him. This shaping is the system’s victory, achieved without firing a shot, won through the mere credible threat of observation.

The autoimmune response attacks the self it meant to defend.

The Red Queen runs not because running gets her somewhere but because stopping is unthinkable. The ground moves beneath her; she must move to compensate. In time, the movement becomes identity. She no longer remembers what it was to stand still, no longer imagines that stillness is possible. Her running has become a kind of standing, a new equilibrium that feels like rest because she has forgotten what rest felt like. The practitioner of emotional countersurveillance risks the same transformation: a self so habituated to management that unmanaged experience becomes inaccessible, an inner life so continuously curated that the curator forgets he is performing.

The Sanctuary That Isn’t

The discipline assumes a division between surveilled and unsurveilled space, between public contexts where the persona is deployed and private contexts where it can be dropped. Home, trusted relationships, designated sanctuaries—these are the territories where authentic expression remains possible, where the mask comes off and the face beneath it can breathe. The architecture of the curated persona depends on this division. Without sanctuary, the performance becomes total; with it, the performance remains bounded, sustainable.

The assumption is increasingly false.

Smart home devices monitor ambient audio for commercial keywords and, potentially, emotional content. Wearable sensors track physiological states continuously, uploading data to servers beyond the wearer’s control. Social media platforms analyze text and image for sentiment, building affective profiles from content users believed was shared only with friends. The private sphere has been colonized by the same surveillance infrastructure that operates in public, and the colonization proceeds regardless of legal protection because the infrastructure is invited in. The user who installs the smart speaker, who wears the fitness tracker, who posts to the platform, has opened the sanctuary to the very systems the sanctuary was meant to exclude. He has placed the idol in the holy of holies and called it convenience.

The practitioner can refuse these invitations, can maintain device-free zones and platform-free relationships, and can construct sanctuary through technological abstinence. The refusal, though, comes at the cost of social disconnection from those who have accepted the infrastructure, of practical inconvenience in systems designed to assume participation, of the constant labor of maintaining boundaries that others do not recognize. The sanctuary that remains is smaller than it was, harder to reach, and more expensive to maintain.

It exists, but it exists as achievement rather than default, as territory reconquered rather than territory never lost.

And even within the sanctuary, the discipline persists. The practitioner who has trained for months to maintain a curated persona does not simply drop the training when he enters private space. The monitoring is internalized. The evaluation continues; the constant awareness of how he appears persists even when no one is watching. The actor who has played a role for years finds the role bleeding into offstage life. The practitioner who has managed emotional display across every public context may discover that management has become automatic, that the authentic expression the sanctuary was meant to enable no longer comes easily.

What the Machine Cannot See

Anyone who has followed this far may feel the weight accumulating: the labor, the internalized watcher, the shrinking sanctuary, the face that learns the mask’s shape. The weight is real. The costs are real. Honest counsel requires acknowledging them without minimization.

The authentic emotional life that the discipline seeks to protect is, in significant measure, already protected by the system’s own incapacity. The fear that every interior state is legible, that the algorithm reads the soul through its somatic traces, that nothing can be hidden: this fear overstates what the technology achieves. The machine aspires to omniscience, but attains only pattern matching. Moreover, the patterns it matches are only those it was trained on, which are not the patterns of genuine human interiority. The theological ambition outruns the engineering. The god that the system wants to be is a god that it cannot become.

The practitioner’s labor, then, is not infinite. He need not achieve perfect opacity, need not maintain the curated persona without lapse, need not police every micro-expression for potential leakage. He needs only to avoid the categorical errors the system is calibrated to detect, to stay outside the classification boundaries that trigger intervention. The threshold for success is lower than the discipline’s rigor suggests.

The discipline is rigorous because the stakes feel total, but the stakes are bounded by the adversary’s actual capabilities, not its aspirational claims.

The Ground That Remains

The inner life is not a territory that can be fully mapped.

The surveillance apparatus operates on a theory of emotion that treats internal states as signals awaiting extraction, patterns that sufficiently sophisticated sensors will eventually decode. The theory assumes that emotion is substrate—that it exists in the face, the voice, the gait, the heartbeat—and that reading the substrate is reading the emotion. The assumption is philosophically contested and empirically unsupported. Expressions correlate with emotions; they do not constitute them. The algorithm produces a map and mistakes it for the territory, but the territory has depths no cartography can render, and those depths are where you live.

The practitioner who understands this gains something beyond technique. He understands that the system’s power is partly illusory, that its confidence exceeds its competence, that the threat it poses is real but bounded. He can calibrate his response to the actual threat rather than the imagined one. He can invest in countermeasures where they matter—contexts of genuine institutional power, adversaries with genuine capacity for harm—and relax where the threat is theatrical, where surveillance performs its function through the belief it induces rather than the reading it achieves.

The Red Queen runs because she cannot imagine stopping. The practitioner who has followed this discipline achieves something else: not the absence of surveillance, which is not available, but the accurate assessment of it, which is. The system watches, but it does not see. It captures, but it does not comprehend. It classifies, but it does not know. The inner life that the practitioner sought to protect was never fully at risk, because the interiority the system claims to read is not interiority at all but the outward traces of interiority, and traces are not the thing itself.

The ground the cameras cannot see is not a place to be reached through elaborate evasion.

It is the place where one has always stood, the few cubic centimeters inside the skull that no sensor penetrates, the self that experiences rather than the signals the self emits. The discipline of disappearance teaches how to manage those signals. The teaching is valuable, and the practice is worth maintaining.

Yet the deepest sanctuary requires a different discipline to reach, if it requires any, for it is already here, beyond the algorithm’s grasp.

The machine reads surfaces. The self is not a surface. The practitioner who grasps this can carry the discipline lightly, can maintain the curated persona without being consumed by it, can navigate the surveilled world without mistaking navigation for the whole of life. The Red Queen runs forever because she believes the race is all there is. The practitioner knows that the race, however real, takes place on a track that circles a center the runners never reach.

That center holds. It is not civilization teetering at the edge of collapse. It is something smaller and more durable, the irreducible first-person fact of experience that no third-person observation can capture. The discipline exists not to create this sanctuary but to remember it, not to build walls around it but to recognize that no walls were ever needed. The interior was always interior. The self was always more than its signals. The ground was never lost, only forgotten, and the remembering is the final practice, the one that makes all the others sustainable.

You were always already free: discipline teaches you to act like it.
