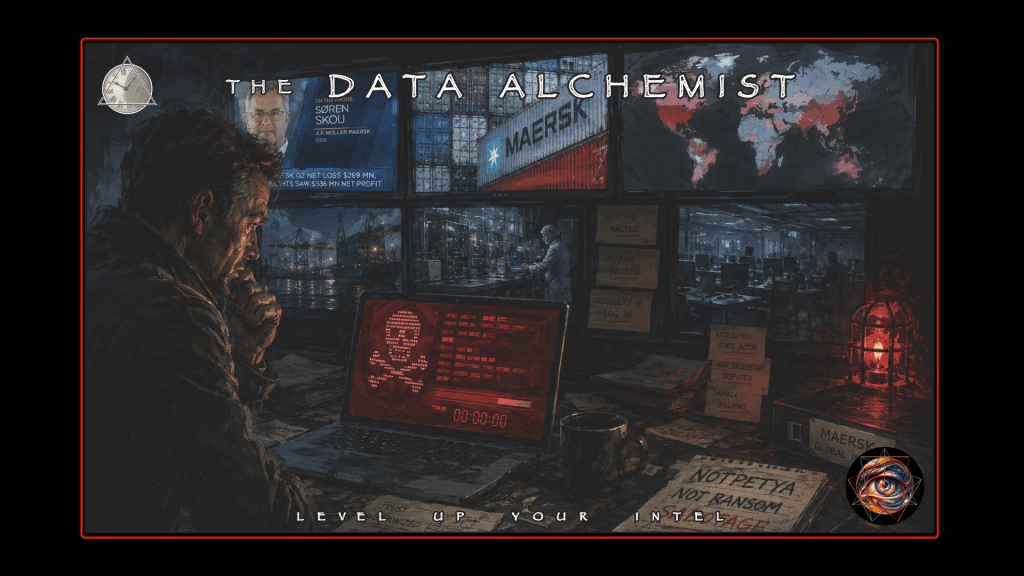

June 27, 2017: screens worldwide display a ransom note promising a transaction. Instead, the perpetrators deliver demolition. The malware called NotPetya rode the shape of expectation, exploiting trust relationships that victims could not uninstall because institutional compliance required them. Within hours, global shipping would grind to a halt and pharmaceutical manufacturing would freeze; Merck would eventually find itself borrowing vaccine doses from the U.S. CDC’s Pediatric Vaccine Stockpile to fulfill orders. Organizations discovered all too late that their disaster recovery plans had been built assuming the responders could still log in.

The incident compressed three durable truths into one fast-moving event:

- Trust, in the form of mandatory networks, can be weaponized

- Recovery is an identity problem before it is a storage problem

- A ransom note may be a diversionary prelude to sabotage

NotPetya did more than exploit trust. It exploited the time it takes defenders to understand that trust has already failed. Governments would later attribute the attack to a military intelligence unit. Insurers would spend years arguing whether state sabotage triggers war exclusions. Estimated total damage would exceed $10 billion.

For the self-taught investigator, Tony Scott’s 2006 detective thriller, Déjà Vu, provides an instructive window into aoristic, or time-based, forensic analysis. In the film, an apparently random federal agent from the ATF named Doug Carlin gains access to a surveillance system that shows him the past in real time, four days delayed. He cannot rewind. He cannot pause. The technology shows him a woman, Claire Kuchever, as she moves through her final hours.

Knowing she will die, he is unable to look away or skip ahead.

The constraint is the source of all the narrative tension. Agent Carlin must notice everything the first time, because the footage will not wait for him to catch up. As he observes, he falls in love with a victim who is already dead. The film contemplates what it means to investigate a catastrophe you cannot prevent.

Three subplots converge toward the climax:

- Agent Carlin’s impossible attachment to the victim

- A fraught negotiation with the surveillance team that controls his access

- Reverse-engineering of a mysterious bomber’s meticulous tempo

The self-taught OSINT analyst occupies an inverted version of Carlin’s position.

The catastrophe has already happened. Traces are frozen in corporate filings, government statements, and technical reports. They always are. Any analyst can rewind and pause. Yet, the footage is intrinsically incomplete, shot from angles chosen by others, and edited by institutional interests before release.

The question is never whether an analyst can change what happened, but whether he can understand the pattern of one event well enough to recognize it in the next one before it detonates. NotPetya is but one explosion whose shrapnel pattern this instructive case study exhumes. Assuming the theater has already burned, the task is to determine whether the fire was part of the act.

The Morning the Screens Went Dark

On a normal cybersecurity Tuesday, ransomware has a rhythm. Someone unknowingly opens the wrong file, unleashing something malicious. A ransom note appears, and a negotiation begins. The cycle is familiar enough to generate playbooks, insurance products, and a cottage industry of negotiation consultants.

Unpleasant as the message on the screen may be, it is at least legible: pay this amount for the key to resume operations.

On June 27, 2017, many organizations encountered a different rhythm altogether. The note appeared, the clock started, and the usual instinct to pay and recover kicked in. Yet there was no one on the other end. While the message looked like garden-variety extortion, the outcome behaved like demolition. In Ukraine, organizations reported disruptions across banks, infrastructure, and public services. Elsewhere, administrators saw the same symptoms as machines rebooted into ransom screens and the ordinary theater of extortion played out on monitors worldwide.

Even the malware’s name was unstable in those first hours, cycling through Petya, NotPetya, Nyetya, as language lagged behind the thing it tried to describe.

The Danish shipping giant Maersk publicly confirmed, the same day, that it had been hit as part of a global cyber attack with IT systems down across multiple sites. The statement was short, the kind of curt corporate acknowledgment designed to be precise without being expansive. FedEx published an investor news update the following day, stating that TNT’s worldwide operations were significantly affected and that the spread involved a virus distributed through a Ukrainian tax software product. Two major multinationals, two public statements, two vertebrae in the spine of a timeline that would eventually stretch across years.

Cisco Talos, one of several security research organizations publishing contemporaneous analyses, described the malware as a worldwide ransomware variant with worm-like spread dynamics. The technical community was already circling a suspicion that would harden over the following days: the malware’s behavior resembled sabotage more than extortion. WannaCry had primed the world to see “ransomware worm” and immediately reach for a familiar playbook.

NotPetya rode that reflex like a pickpocket working a distracted crowd.

Responders chased payment channels and decryptors. Executives assumed a business transaction existed somewhere behind the chaos. The window for containment shrank while debate continued over whether negotiation was even possible. The deeper tell was not the note on the screen; it was the mismatch between the story the malware performed and the physical experience inside organizations, where computers failed broadly, operations ground to a halt, and recovery began to look suspiciously like total rebuild.

A sharp OSINT detective watching the footage pauses here and notes that the victims were already running toward escapes that had been blocked before the show began. Therefore, he does not start by trying to figure out who did it. Without a lead, that path ends in speculation without evidence.

Rather, he builds a clean spine of time, with June 27 as the first vertebra:

- Widespread disruption

- Ransomware-like display

- Rapidly expanding scope

He resists the urge to narrate beyond what the evidence can hold. The same morning, a car bomb killed Colonel Maksym Shapoval, a senior Ukrainian military intelligence officer, in central Kyiv. Ukrainian authorities would later attribute his assassination to Russian intelligence, though the temporal coincidence with NotPetya remains unresolved in the public record.

The Carlin method therefore applies. Watch what passes across the frame, and refuse to fill gaps with speculation even if it feels like memory.

By late June 27 and into June 28, the story had crossed into the corporate language of “significant impact.” Maersk’s update remained terse. FedEx, through TNT, was already pointing at a vector category: a software product used for compliance work in Ukraine. That divergence is instructive. Different organizations have different visibility, different legal constraints, different communications strategies. One company offers a plausible path of entry while another does not yet know its own path.

The self-taught detective learns to watch and analyze for himself how evidence accumulates unevenly, never forgetting that premature certainty is dangerous.

The U.S. government’s alerting infrastructure, then operating as US-CERT, described the campaign under the title “Petya Ransomware.” Crucially, it oriented defenders toward a mitigation and recovery posture rather than payment and decryption. The underlying framing, destruction disguised as ransomware, is the load-bearing insight that makes NotPetya historically distinct.

The Supply Chain as Entry Point

When people say “supply chain attack,” they imagine a compromised cloud library or a poisoned package in a public repository. NotPetya’s entry was a different species with a different method: compromise a widely used software product in a specific geography, then use that trust relationship as a distribution channel. The target was M.E.Doc, a Ukrainian accounting and tax software application required for businesses operating in compliance with local regulations.

The attacker did not need to breach every victim individually, only to compromise the update mechanism of software that victims were already obligated to trust.

Cisco Talos’s “MeDoc Connection” writeup describes the attack as supply-chain focused, with the M.E.Doc software update mechanism used to deliver a destructive payload. FedEx’s investor disclosure independently aligns. TNT used the compromised tax software, and that use allowed the virus to infiltrate its systems. These two sources serve different roles in a casefile. Talos provides a technical narrative explaining what was observed. FedEx provides a business narrative explaining how a trusted product used in a local context became a path into a multinational’s operations.

Each source is a camera angle controlled by someone else, and the analyst earns credibility by demonstrating that multiple angles converge on the same scene.

Supply chain risk becomes viscerally real when you let it. A Danish shipping company with operations in Ukraine runs software mandated by Ukrainian tax authorities. A pharmaceutical giant with manufacturing facilities across continents depends on local systems that comply with local regulations. The compliance requirement is both the trust relationship and the attack surface. Supply chain risk is organizational geography made digital.

Ukraine in June 2017 was a country under pressure across multiple domains simultaneously—cyberattack, assassination, ongoing conflict in the east—and the supply chain compromise landed inside that larger pattern of coordinated stress.

The M.E.Doc compromise illuminates a category of dependency that enterprise risk frameworks often miss: mandatory software. Organizations can choose their cloud providers and negotiate with their operating system vendors. They cannot choose to ignore local tax compliance. The attacker selected a vector that victims could not simply uninstall or replace. The trust was not voluntary; it was regulatory. The analyst who watches long enough begins to see the organization as something more than a case study. The footage reveals how the victim lived before the attack, what dependencies shaped daily operations, and what assumptions were baked into the architecture of normalcy.

The supply chain was not merely exploited; it was selected. The attacker understood that certain software dependencies are stickier than others, and that compliance requirements create durable trust relationships. The magician chose an audience that could not leave the theater, then locked the doors before dimming the lights.

Building a casefile graph demands hard answers before drawing edges.

When the analyst adds “X caused Y” or “X is related to Y” in Maltego or any graph tool, he is asserting something. Graph work is seductive because it looks authoritative. Each edge is a surveillance angle, and too many analysts fill their screens with lines that feel like insight but function as decoration.

The following questions keep the graph honest:

- What is the claim, exactly? “Used by” and “caused” are different edges with different evidentiary requirements.

- What is the source type? Primary disclosure carries different weight than vendor report, journalism, or commentary.

- Is there independent corroboration? Another outlet, another document, another angle strengthens confidence.

- Does the claim survive a boring alternative? Coincidence, misreporting, and conflation must be ruled out before causation is asserted.

- What is the time anchor? The date of the event and the date of publication are different facts that require separate tracking.

- What is the harm of being wrong? Reputational damage, panic, and misdirected defenses are consequences of false edges.
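One way to make these questions operational is to force every edge to carry its own answers. The following is a minimal Python sketch and not a feature of Maltego or any other graph tool. The names `Source`, `Edge`, and `open_questions`, along with the source-type labels, are hypothetical and chosen only to mirror the checklist above. Treating "two different source types" as corroboration is a crude stand-in for real independence.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional

# Hypothetical labels mirroring the "source type" question; not a standard taxonomy.
SOURCE_TYPES = {"primary_disclosure", "vendor_report", "journalism", "commentary"}


@dataclass
class Source:
    title: str
    source_type: str
    published: Optional[date] = None  # publication date, tracked apart from the event date

    def __post_init__(self) -> None:
        if self.source_type not in SOURCE_TYPES:
            raise ValueError(f"unknown source type: {self.source_type}")


@dataclass
class Edge:
    subject: str
    relation: str                      # "used_by" and "caused" are different claims
    obj: str
    event_date: Optional[date] = None  # time anchor for the event itself
    sources: List[Source] = field(default_factory=list)
    boring_alternatives: List[str] = field(default_factory=list)
    harm_if_wrong: str = ""

    def is_corroborated(self) -> bool:
        # Crude proxy for independence: at least two different source types.
        return len({s.source_type for s in self.sources}) >= 2

    def open_questions(self) -> List[str]:
        """Return the checklist questions this edge has not yet answered."""
        missing = []
        if not self.is_corroborated():
            missing.append("independent corroboration")
        if self.event_date is None:
            missing.append("time anchor")
        if not self.boring_alternatives:
            missing.append("boring alternative")
        if not self.harm_if_wrong:
            missing.append("harm of being wrong")
        return missing


edge = Edge(
    subject="M.E.Doc",
    relation="used_by",
    obj="TNT Express",
    event_date=date(2017, 6, 27),
    sources=[
        Source("FedEx investor news update", "primary_disclosure", date(2017, 6, 28)),
        # Publication date left unset here; the analyst fills it in from the artifact.
        Source("Cisco Talos, MeDoc Connection writeup", "vendor_report"),
    ],
    boring_alternatives=["misreporting", "conflation with another product"],
    harm_if_wrong="misdirected defenses",
)
print(edge.open_questions())  # an empty list means the edge may be drawn
```

The design choice is that an edge reports what it is missing rather than returning a bare yes or no, so a half-supported line on the graph stays visibly unfinished.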

NotPetya’s supply chain claim hardens over time precisely because it survives these questions.

Multiple independent sources, both technical and corporate, point to the same vector category. The complete internal compromise chain—how the vendor was breached—remains uncertain because the analyst lacks forensic access. The claim is actionable anyway. Disciplined confidence labeling separates what is verified from what is inferred from what remains unknown.

Propagation Collapses Decision Time

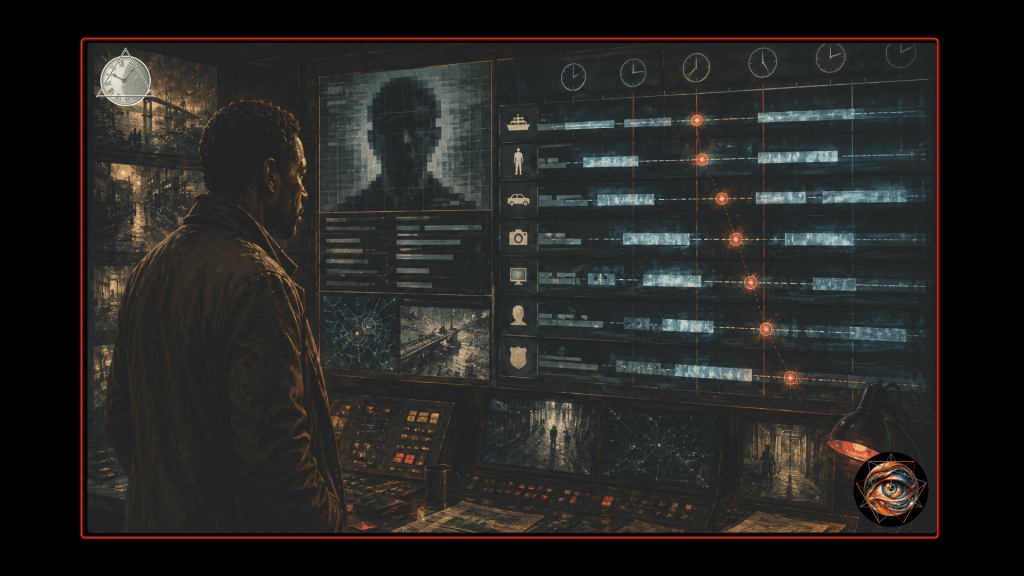

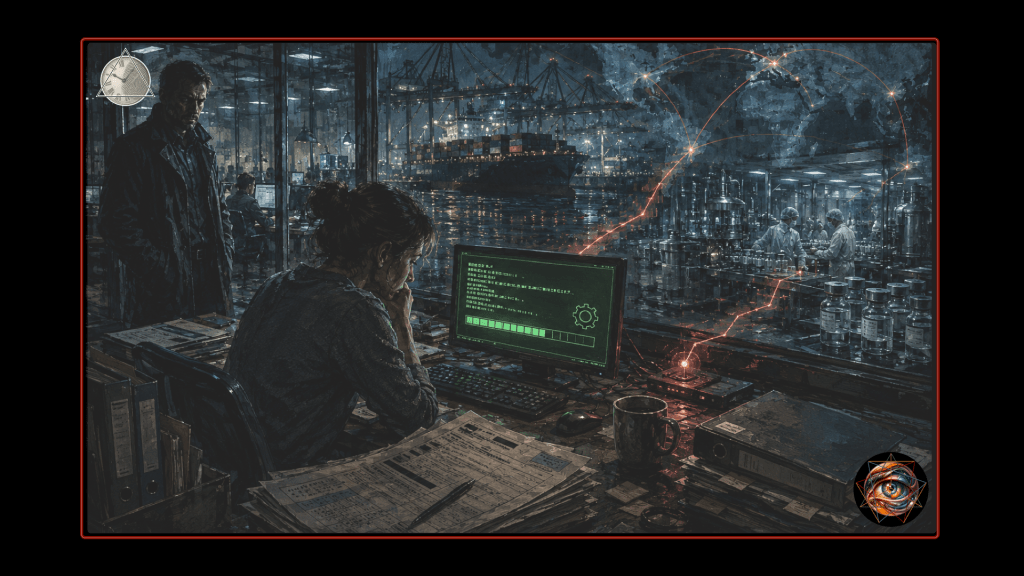

Inside many outbreaks, the difference between a bad day and a catastrophic week is lateral movement. How quickly can a compromise travel from one machine to a network where no one can authenticate anywhere? Early technical analysis noted that NotPetya used multiple mechanisms to spread, and that its behavior differed from malware that simply scans the internet randomly. It was fast, targeted, and ruthless in its exploitation of trust relationships within enterprise environments.

There are two safe points to extract for a public-facing writeup.

The first is that rapid internal spread collapses decision time; if a containment plan depends on a meeting, it will lose to malware that depends on seconds. The second is that identity services become a single point of failure, because large environments are glued together by authentication, authorization, and centralized management. When those services go down, “restoring from backup” transforms into “reconstructing how the organization knows itself.”

The footage from inside affected organizations, reconstructed from later accounts and filings, shows a particular kind of chaos. Machines rebooting simultaneously. Administrators locked out of their own consoles. Phone trees failing because the directory service was offline. The playbook assumed partial impairment, some systems down, others available for coordination. NotPetya delivered total impairment across trust boundaries that no one had mapped as attack surfaces.

The domain controllers that authenticated every employee became the first casualties, and without them, the organization forgot who its own people were.

A ransomware campaign that encrypts files on scattered endpoints is painful but recoverable. A wiper that destroys the identity infrastructure requires reconstruction from first principles. The analyst rewinding the footage sees the moment when the attack exceeded the design assumptions of every incident response plan it touched. The plans assumed the responders could still log in.

The Maersk recovery story has been described publicly in detail sufficient to serve as the human-scale thread that keeps a historical narrative from becoming sterile chronology. WIRED’s longform account describes Maersk leadership receiving a phone call in the early morning hours of June 27 and recounts a rebuild of approximately four thousand servers and forty-five thousand PCs over roughly ten days. The analyst treats this account as high-quality narrative reporting rather than a primary technical source; many of its claims require corroboration to reach full verification. The account remains valuable because it captures the operational reality that makes the lessons stick.

Claire Kuchever is already dead when Carlin begins watching her apartment through the surveillance window. He cannot save her; he can only understand her final hours well enough to recognize what killed her. Maersk occupies this position in the NotPetya narrative. The analyst watches Maersk’s systems go dark, reconstructs the infection sequence from public statements and later reporting, and develops an investment in the organization’s fate that exceeds professional detachment. The analyst studies how Maersk functioned, not just how Maersk was attacked.

When everyone is locked out at once, even documentation may be unreachable if it lives on the wrong system. Recovery is not merely a storage problem, but an identity problem. The organization must re-establish who is allowed to do what before it can restore the systems that depend on those permissions.

NotPetya forced many firms to discover which assumption was baked into their disaster recovery plans. If the plan assumed extortion, it optimized for backup restoration and negotiation containment. If it assumed destruction, it optimized for continuity of operations and identity-based rebuild under duress. The difference between those assumptions is the difference between a difficult quarter and an existential crisis.

FedEx published a TNT Express operations update on June 30 describing progress: remediating systems and methodically bringing business-critical services back online. The language is operational, almost boring, but that is what recovery looks like in real life. Merck’s manufacturing shutdown stretched nearly two weeks; a vaccine production facility went offline long enough that the company borrowed Gardasil doses from the U.S. CDC’s Pediatric Vaccine Stockpile to meet contractual obligations. Mondelēz, maker of Oreo and Cadbury, lost 1,700 servers and 24,000 laptops permanently. Systems can be online yet fragile, which is why recovery proceeds through inventories, staged bring-up, and cautious reintroduction of services.

The footage does not end when the fire trucks leave.

The Maersk recovery story includes a detail that has become legendary in security circles. The company’s entire Active Directory infrastructure was wiped, and the only surviving domain controller was located in Ghana, where a power outage had taken the server offline before the malware could reach it. That single server, preserved by accident rather than design, became the seed from which the entire identity infrastructure was rebuilt. The next organization may not be so fortunate.

Institutional Delay

One way popular accounts fail is by treating dollar figures like plot twists. In a defensible narrative, money is evidence of impact, but it is also noisy. Companies report different slices of loss in different time windows with different incentives. The analyst must read filings carefully enough to understand what is actually being claimed.

Merck’s Form 10-K disclosures serve as a model of what a primary source can provide. The 2018 filing, discussing the 2017 attack, describes a network cyber attack that disrupted worldwide operations including manufacturing, affecting 2017 sales by approximately two hundred sixty million dollars. The filing reports aggregated costs of two hundred eighty-five million dollars in 2017, net of insurance recoveries of approximately forty-five million dollars, and notes an additional 2018 sales impact of roughly one hundred fifty million dollars due to residual backlog. Merck’s insurers invoked war exclusion clauses, arguing that a Russian state act of sabotage fell outside standard all-risk coverage. The dispute reached $1.4 billion; Merck won a $700 million judgment in New Jersey before the case settled confidentially in early 2024, nearly seven years after the screens went dark.

These disclosures reveal what “impact” means inside a mature enterprise—revenue disruption, manufacturing variance, remediation cost, opportunity cost, insurance friction, and multi-year tail effects. The analyst stops chasing “the” number and starts naming categories. Aggregate damage estimates that place total NotPetya losses in the ten billion dollar range combine disclosed losses from public companies with estimated losses from private organizations and second-order supply chain effects. The analyst can cite such estimates while flagging their methodological limitations.
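Naming categories rather than chasing one number can be made concrete with a small tally. The sketch below uses only the Merck figures quoted above, in millions of U.S. dollars. The category labels and the function name `totals_by_category` are hypothetical, and adding the two sales-impact figures across years is itself an analytic choice, not something the filing does.

```python
from typing import Dict, List, Tuple

# (fiscal year, category, amount in millions of USD), as quoted from the filing above.
merck_disclosed: List[Tuple[int, str, int]] = [
    (2017, "sales_impact", 260),
    (2017, "costs_net_of_insurance", 285),
    (2017, "insurance_recoveries", 45),
    (2018, "sales_impact", 150),
]


def totals_by_category(rows: List[Tuple[int, str, int]]) -> Dict[str, int]:
    """Sum amounts within each category; categories are never added to each other."""
    totals: Dict[str, int] = {}
    for _year, category, amount in rows:
        totals[category] = totals.get(category, 0) + amount
    return totals


for category, amount in totals_by_category(merck_disclosed).items():
    print(f"{category}: ${amount}M")
```

Keeping insurance recoveries as their own row, rather than folding them into a single headline figure, preserves the distinction between what was lost and what was later recovered.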

The insurance disputes deserve particular attention because they illustrate how incident consequences propagate through institutional systems long after the technical recovery is complete. The legal question at stake, whether a state-attributed cyber attack triggers war exclusions in commercial policies, had never been tested at this scale. NotPetya became the test case that insurers, policyholders, and courts would argue over for years. The incident-day footage is only the first reel.

The courtroom footage fills additional volumes released on a slower clock.

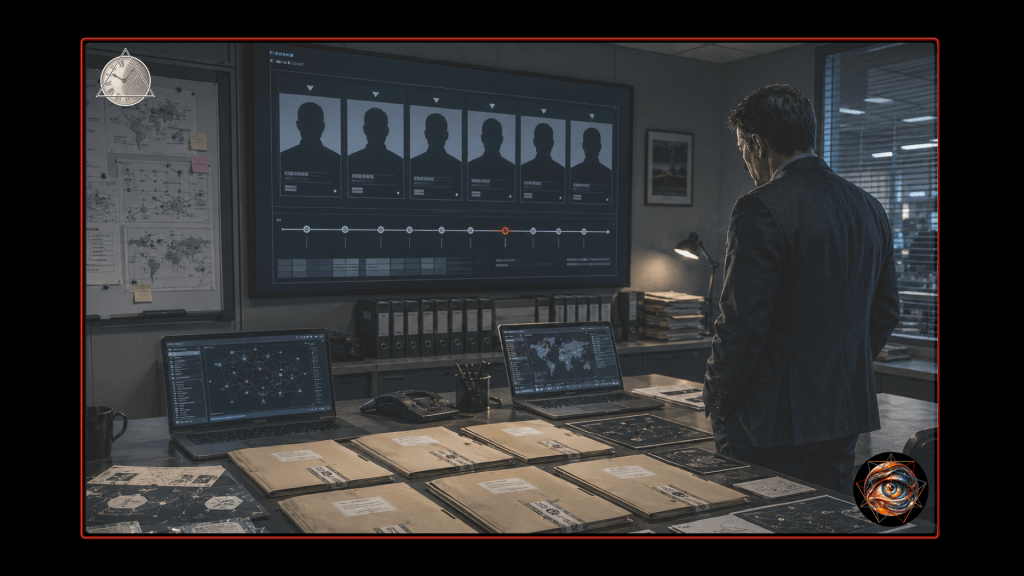

The attribution that transformed NotPetya from an IT incident into a geopolitical event arrived months after the outbreak itself. On February 15, 2018, the U.S. White House issued a statement attributing the June 2017 NotPetya attack to the Russian military, describing it as the most destructive and costly cyber attack in history at that time. The UK government issued a parallel statement. Nearly eight months elapsed between the outbreak and the public attribution, a delay shaped by diplomatic considerations, declassification constraints, and the slow clock of policy consensus.

Government statements are not raw intelligence but policy artifacts released through institutional filters, like surveillance footage that has passed through an editing bay before reaching the analyst’s screen. The analyst can verify that these governments publicly attributed responsibility on that date, and even assess that the attribution aligns with broad technical consensus and later legal actions. He cannot, however, verify the complete classified evidence base.

The correct stance is to separate these confidence levels explicitly:

- Verified: these governments publicly attributed responsibility on that date, and the statements exist as primary artifacts.

- Partially verified: the attribution aligns with technical reporting, multiple allied government statements, and subsequent prosecutorial actions.

- Unverified for civilians: the complete classified evidence base and internal deliberations behind those statements.

Readers do not need the analyst to be omniscient, only to be consistent about what counts as evidence.
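The three confidence levels above can be attached to claims as explicit labels so that a casefile never mixes them silently. This is a minimal sketch under stated assumptions: the names `Confidence`, `Claim`, and `render` are hypothetical, and the claim wording paraphrases the list above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Confidence(Enum):
    VERIFIED = 1
    PARTIALLY_VERIFIED = 2
    UNVERIFIED = 3


@dataclass(frozen=True)
class Claim:
    text: str
    confidence: Confidence
    basis: str  # what the label rests on, stated in one line


def render(claims: List[Claim]) -> List[str]:
    """Return one line per claim, strongest confidence first."""
    ordered = sorted(claims, key=lambda c: c.confidence.value)
    return [f"[{c.confidence.name}] {c.text} (basis: {c.basis})" for c in ordered]


claims = [
    Claim("Classified evidence base behind the attribution",
          Confidence.UNVERIFIED, "not available to civilians"),
    Claim("Governments publicly attributed NotPetya on February 15, 2018",
          Confidence.VERIFIED, "the published statements themselves"),
    Claim("Attribution aligns with technical reporting and later charges",
          Confidence.PARTIALLY_VERIFIED, "vendor analyses, allied statements, DOJ action"),
]
for line in render(claims):
    print(line)
```

Requiring a `basis` string for every claim is the point of the exercise: a label without a stated reason is just another unverified assertion.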

The final major beat in a historical NotPetya timeline is not another outage day, but the slow clock of prosecution. On October 19, 2020, the U.S. Department of Justice announced charges against six Russian GRU officers, connecting them to the deployment of destructive malware and other disruptive actions in cyberspace. NotPetya was included as part of the referenced campaigns. The GRU officers later charged with NotPetya operated within an intelligence apparatus that Ukraine also held responsible for the Shapoval assassination.

Whether the operations were related, parallel, or merely concurrent remains outside civilian verification.

The bomber in Déjà Vu, Carroll Oerstadt, provides the puzzle Carlin must solve. Oerstadt times his attack for maximum casualties, eliminates witnesses on schedule, and exploits gaps in surveillance coverage. The GRU operators behind NotPetya occupy the same structural position. The analyst cannot interview them, only infer their methods from the shrapnel pattern. The supply chain vector, the ransomware disguise, the propagation speed—each is a clue to the attacker’s temporal intelligence.

The attacker understood that the defender’s decision tempo is slower than the malware’s propagation tempo, and the attack was timed for the gap between update and detection, between detection and decision, between decision and containment.

The malware moved in seconds. The defender’s decisions moved in hours. The attribution moved in months. The prosecution moved in years. Each layer of the investigation operates on a different clock, and the intervals between those clocks are where meaning hides.
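The gaps between the slower clocks can be measured directly from the dates given in this account. The sketch below is only date arithmetic on three milestones named in the text; the milestone labels and the function name `intervals` are hypothetical.

```python
from datetime import date
from typing import List, Tuple

# Dates as stated in this account.
milestones: List[Tuple[str, date]] = [
    ("outbreak", date(2017, 6, 27)),
    ("public attribution", date(2018, 2, 15)),
    ("DOJ charges announced", date(2020, 10, 19)),
]


def intervals(events: List[Tuple[str, date]]) -> List[Tuple[str, str, int]]:
    """Return (from, to, days) for each consecutive pair, in chronological order."""
    ordered = sorted(events, key=lambda e: e[1])
    return [(a[0], b[0], (b[1] - a[1]).days) for a, b in zip(ordered, ordered[1:])]


for start, end, days in intervals(milestones):
    print(f"{start} -> {end}: {days} days")
# outbreak -> public attribution: 233 days
# public attribution -> DOJ charges announced: 977 days
```

Sorting before pairing means milestones can be added in whatever order the analyst finds them, which is usually not the order in which they happened.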

History Becomes Safeguard

A narrative about a historical incident earns its space when it upgrades the reader’s mental model. The NIST Cybersecurity Framework, with its five core functions of Identify, Protect, Detect, Respond, and Recover (version 2.0 later added a sixth, Govern), provides scaffolding for that translation.

The Identify function is not about knowing assets in the abstract. It is about knowing which business-critical processes depend on which software supply chains, including regional compliance tooling. FedEx’s disclosure about TNT’s use of a local tax software product is a concrete example of how a subsidiary’s software reality can become a group-wide risk.

Protect and Detect require reframing after NotPetya. Protection is not only about keeping malware out; protection is about preventing systemic collapse once something is in. Detection that depends on someone noticing will lose to malware that moves faster than human attention. The goal is to shorten the time from anomaly to action, not to fetishize tooling.

The Respond function is where the narrative necessarily becomes human. A plan that assumes partial impairment fails when authentication fails everywhere. Response includes executive decision-making, communications, and continuity workarounds, not just technical containment. The meeting cannot happen if no one can log in to schedule it. Out-of-band communication channels, contact lists stored on personal devices, and manual authorization workflows became the lifelines that kept organizations functional while primary systems remained dark.

The Recover function is the part that NotPetya made newly visible to non-technical people. An organization cannot restore files into an environment that cannot authenticate, authorize, or coordinate. The organizational nervous system must be rebuilt before the limbs can move again.

The analyst who builds a casefile from historical materials is not writing a thriller about bad actors, but a case study about systems. The following rules keep it honest under pressure:

- Scope first: decide what you will not do. No targeting private individuals, no live tracking, no “find the hacker” as an objective.

- Two sources per load-bearing claim: if one source carries the story, you do not have a story yet.

- Separate what happened from why it happened: causes are always higher-uncertainty than timelines.

- Prefer primary artifacts: filings, official statements, and vendor reports before commentary.

- Preserve reproducibility: dates, document titles, and quotes short enough to be lawful and checkable.

- Minimize harm: redact personal data, avoid operationally sensitive detail, and treat “interesting” as a risk factor rather than a justification.

The event forces decisions early. The analyst must choose between writing a thriller about villains and writing a case study about how trust fails systemically. The thriller satisfies curiosity. The case study develops competence.

Only one of them enables you to investigate the next incident.

The Fracture Transforms

June 27, 2017 was already a day of fractured attention. The day before, the Supreme Court had agreed to hear the challenge to President Trump’s revised travel ban and allowed the policy to take effect against foreign nationals who lacked a bona fide relationship with a person or entity in the United States, and the Congressional Budget Office had said the Senate Republican health-care bill would leave 22 million more people uninsured by 2026, with 15 million more uninsured in the first year after enactment. In Venezuela, a stolen police helicopter flew over Caracas, fired on the Interior Ministry, and dropped grenades at the Supreme Tribunal of Justice. NotPetya entered a news cycle saturated with competing shocks.

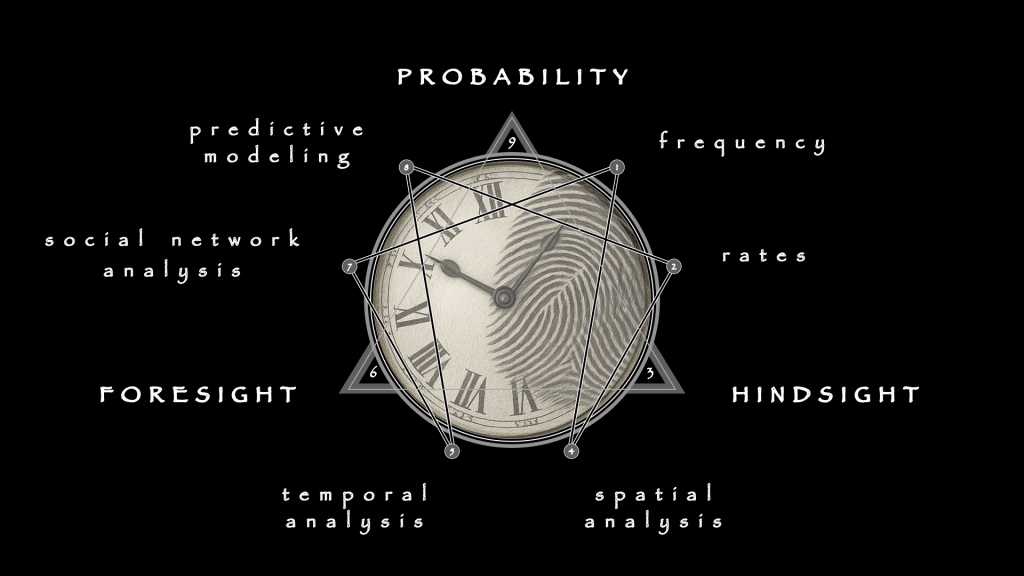

The reader who has followed this narrative from that day through to the DOJ indictment in October 2020 has watched the event unfold across four clocks:

- The malware’s seconds

- The defender’s hours

- The attributor’s months

- The prosecutor’s years

The central argument emerges from that temporal architecture. NotPetya’s true lesson is not a specific vulnerability or malware trick. The lesson is that modern organizations are held together by trust, and trust fails faster than institutions can respond.

There is another lesson that only becomes visible on rewatch, when you rewind the footage and look anew with different eyes. No crime happens outside time, and time is rarely treated as anything more than a neutral marker stamped for administrative convenience. Investigators scour footage, interview witnesses, and assemble data mosaics with forensic discipline, but if they treat temporal information as passive context rather than strategic signal, they create a blind spot within which entire patterns of threat may go undetected.

Time is not merely the stage but a co-conspirator, and the attack was choreographed rather than chaotic.

The first viewing reveals the trick. The second viewing reveals the audience. The third viewing reveals the tempo: when the doors were locked, when the smoke machines started, when the exits became impassable. NotPetya is useful for OSINT training because it teaches the analyst to read time as architecture, to see silence as signal, and to recognize that the intervals between events are as deliberate as the events themselves.

The self-taught analyst lacks subpoena power and classified intelligence. The analyst possesses something more portable: the discipline to label confidence, the patience to triangulate sources, and the willingness to say “I cannot independently verify this” without treating uncertainty as failure. The footage is incomplete. The footage was always going to be incomplete. The analyst’s job is not to fill the gaps with narrative that feels like knowledge. The analyst’s job is to map the gaps accurately enough that the next viewer knows where to look, and when.

Doug Carlin, watching his surveillance footage four days delayed, eventually finds a way to intervene in the past. The film grants him a power that real investigators do not possess. The OSINT analyst cannot change what happened. He cannot warn Maersk before June 27 or patch the M.E.Doc update server before the payload deployed. The casefile is not merely a record of what happened but an intervention in what happens next. Where Carlin found a way to reach backward, the analyst reaches forward.

The method is the same: watch the footage carefully enough to recognize the tempo, document what the previous viewers missed … and trust that someone will be watching when the next show begins. The theater has burned. The next production is already being staged somewhere, in a venue the audience has not yet identified, with props they have been trained to trust, on a schedule the defenders have not yet learned to read.

The tempo will rhyme.
